Four decades ago, philosopher Hilary Putnam described a famous and frightening thought experiment: A “brain in a vat,” snatched from its human cranium by a mad scientist who then stimulates nerve endings to create the illusion that nothing has changed. The disembodied consciousness lives on in a state that seems straight out of The Matrix, seeing and feeling a world that doesn’t exist.

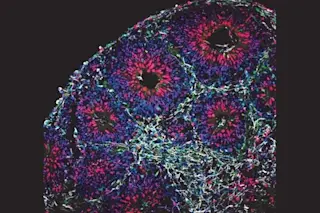

Though the idea was pure science fiction in 1981, it’s not so far-fetched today. Over the past decade, neuroscientists have begun using stem cell cultures to grow artificial brains, called brain organoids — handy alternatives that sidestep the practical and ethical challenges of studying the real thing.

As these models improve (they’re currently pea-sized simplifications), they could lead to breakthroughs in the diagnosis and treatment of neurological disease. Organoids have already enhanced our understanding of conditions like autism, schizophrenia, and even Zika virus infection, and hold the potential to ...