If you don’t have time to sit and read a physical book, is listening to the audio version considered cheating? To some hardcore book nerds, it could be. But new evidence suggests that, to our brains, reading and hearing a story might not be so different.

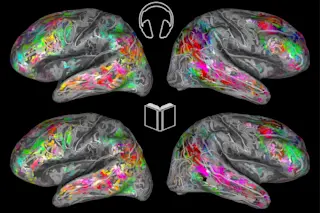

In a study published in the Journal of Neuroscience, researchers from the Gallant Lab at UC Berkeley scanned the brains of nine participants while they read and listened to a series of tales from “The Moth Radio Hour.” After analyzing how each word was processed in the brain’s cortex, they created maps of the participants’ brains, noting which areas helped interpret the meaning of each word.

They mapped out the results in an interactive diagram, which is due to be published on the Gallant Lab website this week.

Looking at the brain scans and data analysis, the researchers saw that the stories stimulated the same cognitive and emotional areas, regardless of their medium. The finding adds to our understanding of how our brains give semantic meaning to the squiggly letters and bursts of sound that make up our communication.

This is Your Brain on Words

In 2016, researchers at the Gallant Lab published their first interactive map of a person’s brain after they listened to two hours of stories from “The Moth.” It’s a vibrant, rainbow-hued diagram of a brain divided into about 60,000 parts, called voxels.

Coding and analyzing the data in each voxel helped researchers visualize which regions of the brain process certain kinds of words. One section responded to terms like “father,” “refused,” and “remarried” — social words that describe dramatic events, people or time.

But the most recent study, which compared brain activity during listening and reading, showed that words tend to activate the same brain regions with the same intensity, regardless of input.

It was a finding that surprised Fatma Deniz, a postdoctoral researcher at the Gallant Lab and lead author of the study. The subjects’ brains were creating meaning from the words in the same way, regardless of whether they were listening or reading. In fact, the brain maps the researchers created from the data for auditory and visual input looked nearly identical.

Their work is part of a broader effort to understand which regions of our brains help give meaning to certain types of words.

More Work Ahead

Deniz wants to take the experiment even further by testing on a broader range of subjects. She wants to include participants who don’t speak English, speak multiple languages or have auditory processing disorders or dyslexia. Finding out exactly how the brain makes meaning from words could fuel experiments for years.

“This can go forever … it’s an awesome question,” she says. “It would be amazing to understand all aspects of it. And that would be the end goal.”

For now, Deniz says the results of this study could make a case for people who struggle with reading or listening to have access to stories in different formats. Kids who grow up with dyslexia, for example, might benefit from audiobooks that are readily available in the classroom.

And if listening to audiobooks is your preferred way to take in a story, you might not be cheating at all. In fact, it seems you’re not losing anything by downloading books on your phone; you’re just being a smart reader, er, listener.