This story was originally published in our May/June 2023 issue as "Making Waves."

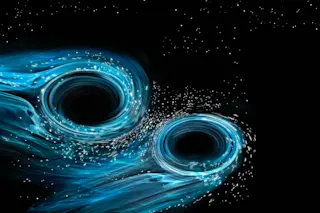

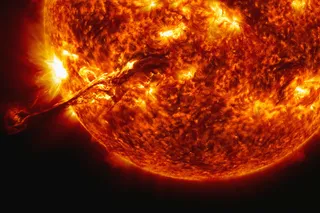

On Feb. 11, 2016, scientists at the Laser Interferometer Gravitational-Wave Observatory (LIGO) unveiled the first direct detection of gravitational waves, produced in this case by the merger of two black holes 1.3 billion light-years away. The announcement (and accompanying scientific paper) came 100 years after Albert Einstein’s 1916 prediction that such waves would be unleashed during violent events in the universe. Based on his new theory of general relativity, Einstein concluded that gravitational waves would form when massive objects accelerated through spacetime, creating outward-spreading ripples as they moved, just like the wake that follows a powerboat speeding across once-tranquil water.

Three physicists — Barry Barish, Kip Thorne, and Rainer Weiss — received the 2017 Nobel Prize in Physics for their “decisive contributions to the ...