At age 116, Kane Tanaka of Japan was recently crowned the oldest person on Earth. She’s six years shy of the longest human life on record: 122 years and 164 days, reached by a French woman, Jeanne Louise Calment, before her death in 1997.

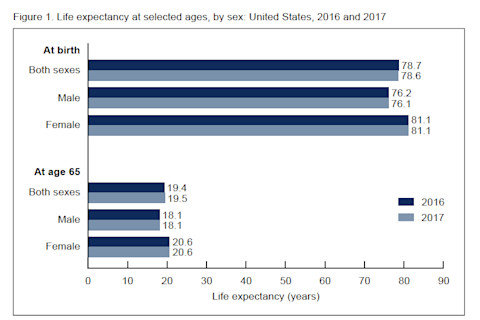

While turning 100 can get you a shout-out on the Today show, there’s nothing newsworthy about surviving into your 70s. That’s simply what life expectancy predicts. In the United States, newborn males can expect to live to 76 on average, and females to 81, according to the latest statistics from the National Center for Health Statistics. For most of the past century, life expectancy has been increasing, thanks to improved healthcare, hygiene and nutrition.

But what about before the advent of modernity, say, 30,000 years ago? It’s a question many scientists have tried to answer: whether ancient Homo sapiens perished in their 30s or lived on into the autumn of their lives.

Just how old is old age?

There’s little doubt among anthropologists that the biological capacity to reach old age goes way back: to the dawn of our species some 300,000 years ago, or even earlier, to the origins of the genus Homo roughly 2 million years ago. We’ve had the ability to survive into our 70s and 80s for a long time.

What’s unclear is how often this potential was realized. Researchers still aren’t sure how common it would have been to see octogenarians in Stone Age societies.

Hunter-Gatherer Grandparents

One way anthropologists have tried to answer the question is by looking at mortality data from present-day people with more traditional lifestyles. In particular, they’ve studied foragers and subsistence farmers with minimal exposure to modern medicine or technology. The idea is that such groups live with the habits and hazards most similar to those of our ancient ancestors.

In traditional societies, life expectancy at birth is three to four decades, compared with seven to eight decades in contemporary, industrialized societies. But this may give the false impression that hunter-gatherers are largely keeling over in their 30s. Not so.

This chart shows the number of years someone can expect to live at birth and at age 65. Notice the increase in life expectancy for those who make it to 65. (Credit: NCHS)

That figure includes deaths during infancy and childhood, the riskiest phase of life for most of human history, and still today in many parts of the world. A 2013 review found that across 18 recent hunter-gatherer groups, one quarter of children die before their first birthday and nearly half (48 percent) die before age 15. The values were nearly identical to those from 43 surveyed historical civilizations, including Classical Rome, medieval Japan and Renaissance Europe. This means that, without modern medicine and technology, babies have had only a 50-50 shot of surviving to age 15 in all the times and places from which we have historical or ethnographic data. For comparison, in modern Western countries today, death rates are around 1 percent for children (ages 0-15) and 0.1 percent for infants.

So the abundance of early deaths drags down the average life expectancy at birth in traditional societies.
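To see how early deaths drag the average down, here is a toy calculation in Python. The cohort of 100 people is entirely hypothetical: the infant and child deaths are set to echo the review’s figures (a quarter before age 1, 48 percent before 15), but the adult ages at death are invented for illustration and come from no study. The helper name `avg_age_at_death` is likewise just for this sketch, and for simplicity every death in a group is assigned a single age.

```python
# Hypothetical cohort of 100 people (illustrative numbers only, not real data).
# Each entry is (age at death, number of people who died at that age).
cohort = [
    (0, 25),   # a quarter die in their first year
    (5, 23),   # 23 more die in childhood, so 48 percent die before 15
    (40, 10),
    (60, 15),
    (72, 20),
    (85, 7),
]

def avg_age_at_death(groups, from_age=0):
    """Average age at death among people who lived to at least from_age."""
    survivors = [(age, n) for age, n in groups if age >= from_age]
    total_people = sum(n for _, n in survivors)
    return sum(age * n for age, n in survivors) / total_people

print(avg_age_at_death(cohort))                         # 34.5: everyone, from birth
print(round(avg_age_at_death(cohort, from_age=15), 1))  # 64.1: only those who reach 15
```

With these made-up numbers, the average for the whole cohort lands in the three-to-four-decade range, yet anyone who survives childhood dies, on average, in their mid-60s.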

Other longevity measures give a better sense of a group’s demographic makeup. Consider instead life expectancy only for individuals who make it to their 15th birthday. Of this subset, nearly two-thirds eventually reach their late 60s or 70s, according to a 2007 analysis of 12 traditional societies.

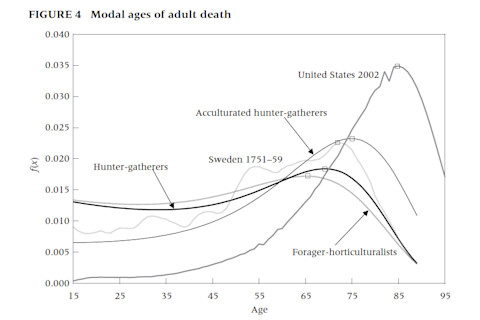

Considering those age 15 and above, the study also calculated the age at which most people die, or the modal age at death (yes, the “mode” from “mean, median and mode,” the fourth-grade stat you never thought you’d use). For foragers and traditional farmers, this value was 72, the same age at which most Swedes died in the mid-1700s. The modal age at death in the United States in 2002 was 85.
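Why use the mode rather than the mean? A quick sketch with invented numbers (not data from the 2007 study) shows the idea: a handful of early adult deaths pulls the mean well below the age at which deaths actually cluster, while the mode sits right at that cluster.

```python
from statistics import mean, median, mode

# Hypothetical ages at death for 20 people who survived past 15
# (invented for illustration; not data from any study).
ages_at_death = [
    22, 31, 38, 45, 51, 56, 60, 63, 66, 68,
    70, 71, 72, 72, 72, 72, 74, 76, 79, 84,
]

print(mean(ages_at_death))    # 62.1: pulled down by the few early adult deaths
print(median(ages_at_death))  # 69.0: average of the 10th and 11th values
print(mode(ages_at_death))    # 72: the single most common age at death
```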

As the study authors put it, “The effective end of the human life course under traditional conditions seems to be just after age 70 years.” Anyone who makes it through childhood has a good shot of becoming an elder.

This graph compares when individuals from various societies are more likely to die. (Credit: Michael Gurven and Hillard Kaplan)

Boney Birth Certificates

Of course, no one today is experiencing conditions identical to those of our Stone Age predecessors. Therefore, to understand the antiquity of old age, other anthropologists have directly studied human ancestors, or at least the bones and teeth they left behind.

Researchers attempt to assign an age at death to the remains of ancient humans in order to estimate the prevalence of elders in a population. But there are two major problems with this approach. First, the collection of skeletons needs to be representative of the full group. It can’t be just a random bunch of people who happened to die and be preserved for 20,000-plus years; it must be an authentic snapshot of death rates across different ages. To address this issue, researchers use statistical methods to check whether they have enough skeletal remains to make meaningful inferences.

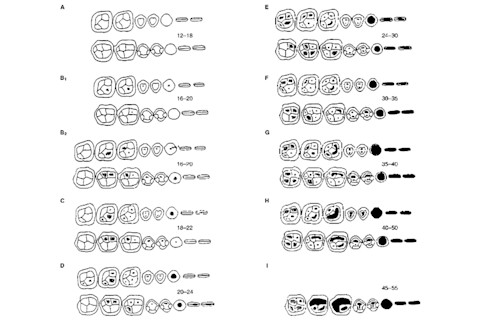

The other problem is that it’s impossible to precisely determine the age of an adult skeleton. As children grow, their bones fuse and their teeth develop on a predictable schedule. The last definitive age indicator comes in the late teens, when the third molars, or wisdom teeth, emerge. After that, estimates are fuzzy and based on the wear and tear that accumulates on bones and teeth with each passing year. Of course, diet and activity levels influence this wear, so the method is hardly precise: it can only place people within a decade or so of their actual age. (It’s totally unrealistic when characters on CSI or Bones age adult skeletons down to the year.)

A chart used to pair dental wear with rough age brackets. (Credit: Lovejoy (1985))

Given this limitation, anthropologists have simply identified all the skeletons with third molars and then divided them into categories of “young adult” (20-40 years) and “old adult” (over 40).

This approach was first applied to fossil teeth from 768 individuals, including Paleolithic European Homo sapiens, Neanderthals and earlier species of human ancestors. Old adults were present across all time periods but were by far most common among remains of European H. sapiens from the past 50,000 years, suggesting more than a fivefold increase in the relative number of elderly individuals. By this measure, modern humans had many more individuals who went on to reach old age than our evolutionary cousins did.

A more recent study using this method compared Stone Age Homo sapiens and Neanderthals with archaeological skeletons from the past 10,000 years, as well as with historical and ethnographic death data. The proportion of older adults in the Stone Age was small compared with that among people of the past 10,000 years. Adult longevity, at least as measured over thousands of years, has actually gone up.

Other scientists disagree with the implications of these studies, though, arguing that the approach does not provide an honest picture of elders’ presence in the past.

Regardless, it’s clear from fossils and from traditional societies today that even without modern medicine or technology, humans can make it to their geriatric years. Old age is nothing new.