In a world where wellness has become paramount, it's easy to overlook a quiet but pervasive health concern: vitamin D deficiency, which affects over 1 billion people worldwide. Often referred to as the "sunshine vitamin," vitamin D plays a pivotal role in our overall well-being. Yet a growing number of individuals find themselves grappling with insufficient levels of this vital nutrient.

In this article, we unveil subtle and not-so-subtle signs that may indicate a deficiency, shedding light on the importance of recognizing and addressing this often-overlooked health issue. Discover how a deficiency in this essential vitamin can impact your life and why it's crucial to heed the warnings your body may be sending.

What Is Vitamin D Deficiency?

Vitamin D deficiency occurs when the body does not get enough vitamin D through diet or sun exposure, or if the kidneys cannot effectively convert the vitamin to its active form. It's more likely in those with diets lacking in vitamin D, such as individuals with milk allergies or lactose intolerance, and those following ovo-vegetarian or vegan diets.

Symptoms and Signs of Vitamin D Deficiency

Vitamin D deficiency can have a profound impact on both physical and mental health. It's important to recognize these signs early, as Vitamin D plays a crucial role in numerous bodily functions, from bone health to immune response.

Common Physical Symptoms

Despite being preventable, vitamin D deficiency remains a significant global health issue. It can lead to a variety of physical symptoms, each impacting the body in different ways:

1. Obesity

Vitamin D deficiency may lead to a higher chance of gaining weight and difficulty in maintaining a healthy weight. Vitamin D plays a role in regulating body fat and metabolism. When the body has insufficient Vitamin D, it may disrupt the metabolic processes, potentially leading to weight gain and obesity.

2. Diabetes

A deficiency in Vitamin D is linked to an increased risk of developing type 2 diabetes. This is due to the role of Vitamin D in insulin production and regulation. Without adequate vitamin D, the body may struggle to manage blood sugar levels effectively, increasing the risk of diabetes.

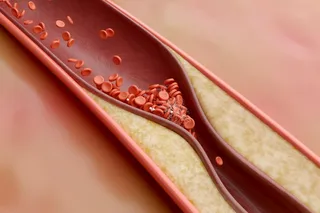

3. Hypertension (High Blood Pressure)

Low levels of Vitamin D can contribute to hypertension. Vitamin D is essential for maintaining healthy blood vessels and regulating blood pressure. A deficiency can lead to stiffening and narrowing of blood vessels, increasing the risk of high blood pressure.

4. Fibromyalgia

Fibromyalgia, characterized by widespread musculoskeletal pain, may be associated with low levels of vitamin D. This vitamin plays a role in bone and muscle health, and its deficiency can contribute to the chronic pain and tenderness experienced in fibromyalgia. However, whether low vitamin D causes fibromyalgia, and whether supplementation is an effective treatment, has not yet been fully established.

5. Chronic Fatigue Syndrome

Persistent fatigue that isn't relieved by rest can be a sign of vitamin D deficiency. Vitamin D is vital for energy production and muscle function. Insufficient levels can lead to a general feeling of tiredness and lack of energy, characteristic of chronic fatigue syndrome.

6. Osteoporosis

Vitamin D is crucial for bone health, and its deficiency can lead to osteoporosis, a condition where bones become weak and brittle. This increases the risk of fractures, particularly in older adults.

7. Neurodegenerative Diseases

Low levels of vitamin D3 have been linked to neurodegenerative diseases like Alzheimer’s. Vitamin D is believed to play a role in brain health, and its deficiency might contribute to the development and progression of such diseases.

8. Increased Risk of Certain Cancers

Deficiencies in vitamin D3 are potentially linked to a higher risk of developing certain types of cancer, such as breast, prostate, and colon cancers. Vitamin D plays a role in cell growth regulation and immune function, which can influence cancer risk.

9. Heart Disease and Stroke

Vitamin D deficiency may elevate the risk of cardiovascular diseases, including heart disease and stroke. Vitamin D is important for heart and blood vessel health, and insufficient levels can lead to cardiovascular complications.

10. Autoimmune Diseases

There is evidence suggesting that Vitamin D3 deficiency might contribute to the development of autoimmune disorders. Vitamin D plays a key role in regulating the immune system, and its deficiency may lead to an increased risk of autoimmune diseases.

11. Periodontal Disease

A higher likelihood of developing gum diseases has been associated with Vitamin D deficiency. This vitamin is important for oral health, as it helps in maintaining the health of gums and teeth.

12. Muscle Weakness and Pain

Reduced neuromuscular function, leading to muscle weakness and pain, can be a symptom of vitamin D deficiency. Vitamin D is essential for muscle strength and coordination, and low levels can result in muscle discomfort and weakness.

Psychological Effects

In addition to the physical signs, vitamin D deficiency can also have psychological effects, including problems with mood regulation, depression, and anxiety.

13. Mood Regulation

Vitamin D receptors are present in various areas of the brain that play a key role in regulating mood. This suggests that Vitamin D might have a significant influence on mental health. The brain's ability to manage emotions and mood states could be impacted by vitamin D levels, indicating a potential link between this nutrient and overall mental well-being.

14. Depression

A review of 61 articles revealed that people with depression had lower levels of vitamin D compared to those without depression. This correlation suggests that vitamin D might play a role in the onset or severity of depression. However, the exact connection is not fully understood.

15. Anxiety

The presence of vitamin D receptors in brain regions associated with mood regulation is also significant in the context of anxiety disorders. Studies indicate that lower levels of vitamin D are often associated with increased symptoms of anxiety. This correlation suggests that vitamin D might be important in the development or exacerbation of anxiety disorders. The exact mechanism by which vitamin D impacts anxiety symptoms is still a subject of ongoing research, but the existing evidence points to a notable relationship between vitamin D levels and anxiety.

What Are the Causes of Vitamin D Deficiency?

There are a few different reasons why someone might be deficient in vitamin D, including limited exposure to sunlight, dietary habits, skin color, underlying medical conditions, and age.

Lack of Sunlight Exposure

The most common cause is not getting enough sunlight. People who live in cold climates or who spend most of their time indoors are at a higher risk for vitamin D deficiency.

Dietary Factors

A diet low in vitamin D sources, such as fatty fish, egg yolks, and fortified foods, can lead to vitamin D deficiency.

Skin Color

Another cause of vitamin D deficiency is having dark skin. The melanin in darker skin reduces the skin's ability to produce vitamin D from sunlight.

Medical Conditions

Certain medical conditions that impair intestinal absorption, such as Crohn’s disease and celiac disease, can also lead to vitamin D deficiency.

Age

Vitamin D deficiency is also common in the elderly. This is because the skin's ability to produce vitamin D from sunlight decreases with age.

Testing and Diagnosis

Proper testing and diagnosis are crucial for maintaining overall health and well-being. Since so many factors can contribute to deficiency, it's important to identify the individuals who are most at risk of low vitamin D and would benefit from testing.

(Credit: Babul Hosen/Shutterstock)

When to Get Tested

Here are specific groups of people who should consider getting tested for vitamin D deficiency:

Those with inadequate sun exposure: This includes people living in areas with limited sunlight, those who spend little time outdoors, or individuals who consistently use sunscreen or wear clothing that limits skin exposure to the sun.

People with limited oral intake: Individuals who have a diet low in Vitamin D sources, such as fatty fish, fortified dairy products, and certain supplements, may be at risk.

Persons with impaired intestinal absorption: This group includes those with conditions like Crohn’s disease, celiac disease, and cystic fibrosis, which can affect the body's ability to absorb vitamin D from food.

Elderly individuals: Older adults produce less Vitamin D in their skin when exposed to sunlight compared to younger people.

Patients with Chronic Kidney Disease (CKD): These patients have decreased conversion of Vitamin D to its active form due to impaired renal function.

Individuals with musculoskeletal symptoms: This includes those experiencing bone pain, muscle weakness, or general myalgias, as these symptoms can be associated with Vitamin D deficiency.

People at risk of bone loss: Those with low bone mineral density, a history of low-impact fractures, or at risk of falling should be evaluated for Vitamin D deficiency to help reduce the risk of fractures.

Pregnant or lactating women: The role of vitamin D in these groups is increasingly recognized as important, although guidance may be refined with ongoing research.

Understanding the Test Results

The general consensus among experts is that a serum 25-hydroxyvitamin D level under 20 ng/mL indicates a deficiency, and a level from 21 to 29 ng/mL suggests insufficiency. It's recommended to maintain vitamin D levels above 30 ng/mL in both children and adults to maximize the health benefits it provides.
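For readers who track their own lab results, the cutoffs above can be sketched as a small Python function. This is only an illustration, not a diagnostic tool: the function name `classify_vitamin_d` is hypothetical, and the handling of boundary values (a reading of exactly 20 ng/mL counted as insufficient, exactly 30 ng/mL counted as sufficient) is an assumption, since the ranges above do not spell out those edge cases.

```python
def classify_vitamin_d(level_ng_ml: float) -> str:
    """Map a blood vitamin D level (ng/mL) to the categories described above.

    Assumption: values from 20 up to (but not including) 30 are treated as
    insufficient, and 30 or higher as sufficient.
    """
    if level_ng_ml < 0:
        raise ValueError("A blood level cannot be negative")
    if level_ng_ml < 20:
        return "deficient"
    if level_ng_ml < 30:
        return "insufficient"
    return "sufficient"


if __name__ == "__main__":
    # Example readings, chosen only for illustration
    for reading in (12.0, 25.0, 35.0):
        print(reading, "ng/mL:", classify_vitamin_d(reading))
```

A healthcare provider should interpret actual results, since individual labs and guidelines may use slightly different ranges.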

What’s the Best Treatment for Vitamin D Deficiency?

Treating Vitamin D deficiency typically involves a combination of dietary changes, increased sunlight exposure, and Vitamin D supplementation:

(Credit: microgen/Shutterstock)

Dietary Changes

Incorporate foods rich in Vitamin D into your diet. These include fatty fish (like salmon, mackerel, and tuna), egg yolks, cheese, and fortified foods (such as certain dairy products, orange juice, soy milk, and cereals).

Sunlight Exposure

Spending time in the sun can help your body produce more Vitamin D. Aim for about 10-15 minutes of sun exposure a few times a week, depending on your skin type, the time of year, and your geographical location. Be mindful of the risk of skin damage and skin cancer from too much sun exposure.

Vitamin D Supplements

Often, dietary changes and sunlight exposure are not enough to correct a deficiency. In such cases, taking Vitamin D supplements is common. Vitamin D3 (cholecalciferol) is typically recommended as it's more effective at raising and maintaining vitamin D levels.

Choosing the Best Vitamin D Supplements

The best way to treat Vitamin D deficiency is to get more sun exposure. However, during the winter months or if you live in a place with little sun, this may not be possible. In these cases, you may need to take a Vitamin D supplement. Your doctor can help you determine the best course of treatment for your specific situation.

How Long Does It Take To Recover From Vitamin D Deficiency?

If you are deficient in vitamin D, it may take some time to build up your levels. This is because vitamin D is stored in body fat, and it takes time for the body to replenish those stores.

You will likely need to take a supplement for several months before you see an improvement in your symptoms. Additionally, you may need to take a higher dose of Vitamin D than what is recommended for people who are not deficient.

Conclusion

In this exploration, we've uncovered a vital aspect of overall well-being. From subtle symptoms to more apparent health concerns, this article has illustrated the diverse ways in which a lack of this essential nutrient can affect us. Recognizing these signs is the first step towards a healthier life.

However, it's crucial to remember that self-diagnosis is not a substitute for professional medical advice. If you suspect a Vitamin D deficiency, consult a healthcare provider who can assess your specific needs and guide you toward appropriate solutions. Prioritizing your Vitamin D levels is an investment in your long-term health, ensuring that you can embrace each day with vitality and vigor.

Frequently Asked Questions About Vitamin D Deficiency

What Is Vitamin D, and Why Is It Important?

Vitamin D is a fat-soluble vitamin that plays a crucial role in maintaining bone health, supporting the immune system, regulating mood, and aiding in calcium absorption. It's often referred to as the "sunshine vitamin" because the body can produce it when the skin is exposed to sunlight.

Who Is at Risk of Vitamin D Deficiency?

People at higher risk of vitamin D deficiency include those with darker skin, older adults, those with limited sun exposure, people with certain medical conditions (like Crohn's disease or celiac disease), and those who follow strict vegan diets.

How Can I Get Enough Vitamin D Through Sunlight?

Spending time outdoors in direct sunlight, particularly during midday, allows your skin to produce vitamin D. The amount of sun exposure needed varies based on factors like skin type, location, and time of day. Typically, 10-30 minutes of sunlight exposure to arms and legs a few times a week can suffice for many people.

What Foods Are High in Vitamin D?

Foods rich in vitamin D include fatty fish (e.g., salmon, mackerel, and tuna), egg yolks, fortified dairy and plant-based milk, fortified cereals, and cod liver oil. Dietary supplements are another option to increase vitamin D intake.

Can I Take Vitamin D Supplements To Prevent Deficiency?

Yes, vitamin D supplements are a common way to prevent and treat deficiency, especially when it's challenging to obtain enough through sunlight and diet. Consult a healthcare provider for guidance on appropriate dosages.

How Is Vitamin D Deficiency Diagnosed?

A blood test measuring your serum 25-hydroxyvitamin D (25(OH)D) levels is used to diagnose vitamin D deficiency. Levels below 20 ng/mL are generally considered deficient.

What Is the Importance of Vitamin D in Overall Health?

Vitamin D has properties that reduce inflammation, protect against oxidative stress, and safeguard nerve cells, which are beneficial for immune function, muscle activity, and the health of brain cells. It also helps the body absorb calcium. A deficiency can cause bone pain, fatigue, muscle weakness, joint pain, and depression.

What Are the Potential Health Risks Associated With Long-Term Vitamin D Deficiency?

Prolonged deficiency can lead to bone disorders like rickets in children and osteoporosis in adults. It may also increase the risk of certain chronic diseases, including cardiovascular disease and autoimmune conditions. Children with Vitamin D deficiency may experience delayed growth and development.

Article Sources

Our writers at Discovermagazine.com use peer-reviewed studies and high-quality sources for our articles, and our editors review for scientific accuracy and editorial standards. Review the sources used below for this article:

National Institutes of Health: Office of Dietary Supplements. Vitamin D Fact Sheet for Consumers.

Medicina. Vitamin D Deficiency: Consequence or Cause of Obesity?

Nutrients. Vitamin D in Fibromyalgia: A Causative or Confounding Biological Interplay?

North American journal of medical sciences. Correction of Low Vitamin D Improves Fatigue: Effect of Correction of Low Vitamin D in Fatigue Study (EViDiF Study)

Revista brasileira de reumatologia. The importance of vitamin D levels in autoimmune diseases

Indian journal of psychological medicine. Vitamin D and Depression: A Critical Appraisal of the Evidence and Future Directions

Current nutrition reports. Is Vitamin D Important in Anxiety or Depression? What Is the Truth?

Mayo Clinic proceedings. Vitamin D Deficiency in Adults: When to Test and How to Treat

National Institutes of Health. Vitamin D Fact Sheet for Health Professionals

Irish Journal of Medical Science. The effect of vitamin D treatment on quality of life in patients with fibromyalgia

Best Practice and Research Clinical Endocrinology. The effect of vitamin D on bone and osteoporosis

Heliyon. Vitamin D and neurodegenerative diseases

Experimental and Therapeutic Medicine. Prevalence of serum vitamin D deficiency and insufficiency in cancer: Review of the epidemiological literature

The American Journal of the Medical Sciences. Vitamin D Deficiency and Risk for Cardiovascular Disease

Biology of Sex Differences. The role of vitamin D in autoimmune diseases: could sex make the difference?

Medicina. The Relationship between Vitamin D and Periodontal Pathology

Healthcare. Impact of Vitamin D Deficiency on Mental Health in University Students: A Cross-Sectional Study

International journal of health sciences. Vitamin D Deficiency- An Ignored Epidemic

Cureus. Prevalence of Vitamin D Deficiency and Associated Risk Factors in the US Population

Aging and disease. The Problems of Vitamin D Insufficiency in Older People

Frontiers in nutrition. Vitamin D Intake and Factors Associated With Self-Reported Vitamin D Deficiency Among US Adults: A 2021 Cross-Sectional Study

Annals of epidemiology. Vitamin D Status: Measurement, Interpretation, and Clinical Application