This story was originally published in our July/August 2022 issue as "Ghosts in the Machine."

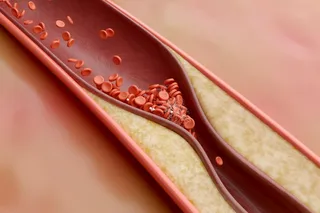

If a heart attack isn’t documented, did it really happen? For an artificial intelligence program, the answer may very well be “no.” Every year, an estimated 170,000 people in the United States experience asymptomatic — or “silent” — heart attacks. During these events, patients likely have no idea that a blockage is keeping blood from flowing or that vital tissue is dying. They won’t experience any chest pain, dizziness or trouble breathing. They don’t turn beet red or collapse. Instead, they may just feel a bit tired, or have no symptoms at all. But while the patient might not realize what happened, the underlying damage can be severe and long-lasting: People who suffer silent heart attacks are at higher risk for coronary heart disease ...