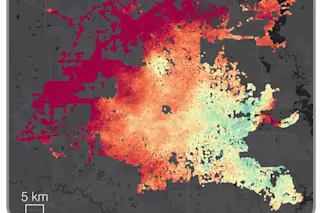

To many of us, the idea that out-of-control global warming is about to parch forests and drown small island nations seems highly implausible. Climate may be fickle, but it’s rarely that malicious.

Skeptics might do well to scroll back through Earth’s history 800 years or so, however. England, today notorious for its dreary chill, was notable for its wines. Greenland, today an ice sheet fringed with land barely suitable for grazing sheep and reindeer, was then fertile ground where Viking farmers tilled fields of grain; alas, several hundred years later these same Norse settlers would be driven away by the deepening cold, the last survivors struggling to bury their dead in the rising permafrost.

Turn the clock back 17,000 years more, and much of the Northern Hemisphere looks like the interior of Greenland today: a featureless desert of ice thousands of feet thick. Turn back 5 million years more, and ...