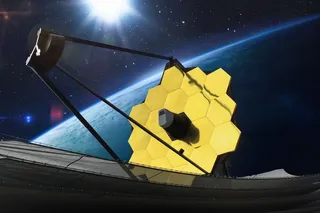

Over the past six months, we Earthlings have seen some pretty awe-inspiring images through the James Webb Space Telescope (JWST). Since the telescope’s first image was revealed to the public in July 2022, JWST has captured images of ancient galaxies, glittering nebulas and remote exoplanets.

It’s clear these pictures aren’t the work of your average point-and-shoot camera — each one is the result of an impressive array of instruments and technologies, finely tuned to bring us cosmic views so dazzling they could be mistaken for computer-generated graphics.

It all begins when light from a distant object strikes the telescope’s 21-foot-wide, gold-plated mirror, which is composed of 18 hexagonal segments. Dividing the mirror this way made it easier for NASA scientists to launch JWST into orbit, but the segments needed to be calibrated with astounding precision to act as one giant mirror and maintain sharp focus.
