As he swipes his finger over the touch screen, Joseph Quintanilla senses a subtle bumpiness. Rubbing back and forth, he feels the roughness give way to what seems like a flat glass surface. “Yeah, I can feel it getting smoother,” says Quintanilla, who is blind.

The touch pad in his hands displays a snowy, frosted window that his finger wipes smoother with every pass. It’s an effect created in part by Ali Israr, an engineer at Disney Research labs. Israr specializes in haptic engineering, which focuses on applying tactile stimulation to our interactions with computers.

The texture under Quintanilla’s finger doesn’t mimic the exact feeling of snow under fingertips — the temperature shifting, solid becoming liquid — but it does convey the feeling of a rough texture becoming smooth and even. Once Israr describes the image, Quintanilla immediately gets it. “Oh yeah, I can picture it now,” he says. “That’s very cool.”

Quintanilla, who works at the National Braille Press as its director of major gifts and planned giving, is looking for a tool that could help blind children read maps and graphs when taking standardized tests. Currently, these students use sheets of paper with raised lines to represent images — a format essentially unchanged since the 1820s and increasingly costly to print.

Quintanilla heard about Israr’s work on Disney’s TeslaTouch**, a flat screen that uses frictional forces to make users feel like they’re interacting with images on it, and he decided to check it out to consider it for grant funding.

Israr is part of a community of researchers working to make touch screens more, well, touchable. Movements with our fingers across a flat screen have come to replace pressing buttons and keys on everything from ATMs to phones, and researchers now are working on the next frontier: adding tactile feedback to help enhance the feeling that users are interacting directly with the technology. Advanced touch screens like the TeslaTouch are on the cusp of widespread use, according to Israr. “It might seem crazy now, but I bet in 10 years it will just seem like, ‘Of course that happened,’ ” he says. “It’ll just become what we expect of our devices.”

Causing a Sensation

With his thick, black hair, round cheeks and his “work uniform” of a T-shirt and hoodie, Israr appears younger than his late 30s. He always knew he would be an engineer, but until he began his doctoral work at Purdue University, he had never heard of haptics. (From the Greek word meaning “to touch.”) While there, Israr worked on a device called a tactuator — a machine that took audio recordings and translated them into movements felt by the fingers — to understand how to relay the sounds of various letters. Theoretically, it would help the blind communicate based on Helen Keller’s famed Tadoma method, which allowed her to understand speech by placing her fingers on a speaker’s lips and jaw line.

Through his research, Israr came to understand the complications of communicating different types of touch through technology — vocal vibrations, gusts from breathing and jaw movement. He set about trying to imitate the sensations with machines. His research showed that users could understand far more sounds through touch than anyone had expected, but unfortunately, once Israr graduated in 2007, no one continued work on the tactuator. It remains just an idea and a prototype to this day.

After a postdoctoral stint at a haptics lab at Rice University, Israr got a job at Disney in 2009. In the long term, haptics could find uses in amusement park rides or gaming devices. At Disney, he started working under Ivan Poupyrev, a principal research scientist whose expertise had been in sensors and product design. (Poupyrev has since left Disney to work at Google.) Early in Israr’s tenure, Poupyrev and a researcher named Olivier Bau built an early model of the haptic tablet hardware.

Poupyrev initially came up with the idea by accident the year before, when he was working as an engineer at Sony. Late one night, as he tried to assemble parts of a touch screen from a supplier while working on another product using tactile feedback, he mixed up the wiring instructions. Suddenly, when he slid his finger across the screen, he felt a rubbery sensation. At the time, Poupyrev was busy with other work, so he set aside the weird sensation for later.

When a restructuring at Sony shut down Poupyrev’s product development, he joined Disney as principal research scientist. There, he bought similar materials — a glass plate covered by a transparent conductive sheet, itself covered by a thin insulation layer — and tried to re-create the feeling.

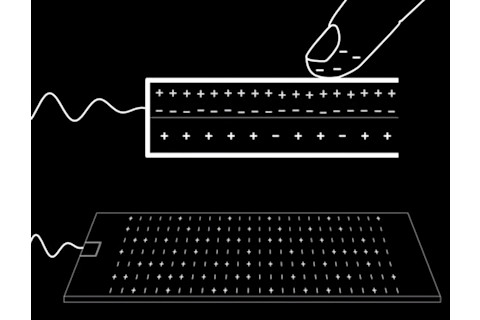

In trying to understand the phenomenon, the team discovered a paper from 1953 written by a chemist named Edward Mallinckrodt that first described the technology, dubbed electrovibration.

Mallinckrodt discovered the phenomenon when he noticed that a shiny, brass electric light socket felt smoother when it was off than it did with the light burning. After a few years of experiments trying to figure out why, he realized that the thin insulating coating shielded the finger from being shocked while still letting it sense the electricity running through the surface. The charge attracted the finger gently toward the surface, stretching its skin. While the force was too weak to detect when the finger was still, it created tactile resistance when the finger moved across the surface.

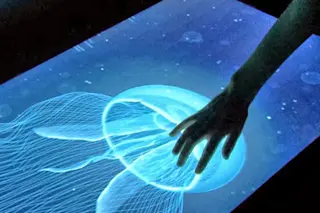

A user “feels” the jellyfish in real time on a TeslaTouch haptic screen. | Disney Research

Within weeks, Poupyrev and Bau had replicated the sensation from Poupyrev’s happy accident. They named it the TeslaTouch, after Nikola Tesla, a pioneer of the alternating current that powered the device. Poupyrev knew they were onto something and brought on Israr, with his experience understanding people’s perceptions of haptic information, to help determine how to convert those electrical forces into meaningful experiences.

A Touching Experiment

To figure that out, Israr and the TeslaTouch team needed more data. They brought study participants into the lab to describe how it felt when Israr charged the screen with voltages of various frequencies and amplitudes. Participants were encouraged to describe the sensations using nouns: fur, silk, jeans, sandpaper. Low-frequency stimuli felt rougher, like wood and bumpy leather. High-frequency stimuli felt like smoother surfaces, such as paper and a painted wall. Generally, varying the amplitude did the same: High amplitudes felt smoother.

Combining the two produced even more sensations. A high frequency and high amplitude made the surface feel extra smooth, like a wall painted in latex. On the other hand, turning up the amplitude at a low frequency made the screen feel slightly sticky, like a motorcycle handle or soft rubber. One participant even said it felt like he was running his fingers through a viscous fluid.
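For readers who think in code, the participants' reports can be summarized as a small lookup. This is only an illustrative sketch: the function name, the threshold values and the units are assumptions made for the example, not figures from the Disney study.

```python
def describe_texture(frequency_hz, amplitude_v,
                     freq_split_hz=200.0, amp_split_v=100.0):
    """Map a drive signal to the kind of texture participants reported.

    The two split points are hypothetical; the article gives only the
    qualitative trend (low vs. high frequency, low vs. high amplitude).
    """
    low_frequency = frequency_hz < freq_split_hz
    high_amplitude = amplitude_v >= amp_split_v
    if low_frequency:
        # Low frequency felt rough; turning up the amplitude made it sticky.
        return "sticky" if high_amplitude else "rough"
    # High frequency felt smooth; high amplitude made it extra smooth.
    return "extra smooth" if high_amplitude else "smooth"


print(describe_texture(80, 115))   # low frequency, high amplitude
print(describe_texture(400, 115))  # high frequency, high amplitude
```

A real texture library would hold many more entries and would come from measured responses rather than two fixed cutoffs.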

The team now had a library of textures it could use to make the screen feel smooth, bumpy, rough or sticky. Next, they brainstormed tactile illusions that made use of those effects on the TeslaTouch. The bumpy feeling of a low-frequency current gave rise to a virtual computer mouse with a ridged scroll wheel. By progressively increasing amplitude at a low frequency, the researchers felt they could give the impression that users’ fingers were sticking to the screen. They projected an image of three different-size weights. The biggest, the one with the highest amplitude, produced the stickiest sensation, so the friction that it created as a user moved it across the screen gave the impression of heft.

After a heavy snowstorm one weekend, the team came up with the idea of a frosted window being wiped clean, with each stroke increasing the frequency, making the surface feel smoother to the touch — the effect Quintanilla would feel in Israr’s office.
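The frosted-window idea can be sketched in a few lines: the screen is treated as a grid, and each pass of the finger over a cell raises that cell's frequency until it reaches the smooth end of the range. The class name, grid layout and frequency values below are assumptions chosen for illustration, not details of the team's software.

```python
class FrostedWindow:
    """Toy model of the wipe-the-frost effect (all numbers hypothetical)."""

    def __init__(self, cols, rows, rough_hz=80.0, smooth_hz=400.0, step_hz=80.0):
        self.smooth_hz = smooth_hz
        self.step_hz = step_hz
        # Every cell starts at the rough, low-frequency end of the range.
        self.freq = [[rough_hz] * cols for _ in range(rows)]

    def wipe(self, col, row):
        """Register one finger pass over a cell and return its new frequency."""
        new_freq = min(self.freq[row][col] + self.step_hz, self.smooth_hz)
        self.freq[row][col] = new_freq
        return new_freq


window = FrostedWindow(cols=4, rows=3)
for _ in range(5):
    window.wipe(1, 1)          # rub the same spot five times
print(window.freq[1][1])       # capped at the smooth frequency
print(window.freq[0][0])       # an untouched cell is still rough
```

Capping the frequency stands in for the moment the glass feels fully clear; further rubbing changes nothing.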

Haptic screen technology could be a boon to the blind, as Louise Chuha, a board member of the Golden Triangle Council of the Blind in Pittsburgh, discovers for herself. | Disney Research

Over the next several years, Israr and his colleagues traveled the world, presenting the model at technology conferences to build interest. While the technology impressed scientists and businessmen, they kept asking what else it could do. Creating textures on a screen was interesting, but could it display 3-D graphics or convey different sensations to different parts of a hand, truly mimicking the way we experience touch in our day-to-day lives? Israr decided to find out.

Reaching New Peaks

As he read up on the sense of touch, Israr learned that humans perceive dips and slopes on a surface primarily through the stretching of the skin. In places where a surface is raised, they experience increased friction on their finger. The opposite naturally occurs with a dip in the surface. Israr then surmised that a higher voltage, which would create more friction, would give the user the impression that the screen was pushing up against his finger, producing a 3-D effect. With that hypothesis, he went to work trying to create the perception of a single large bump on a flat screen.

Israr again turned to research subjects, asking them to feel a touch screen powered with varying voltages. Israr projected an image that looked like a bell photographed from above and asked test subjects what type of frictional pattern best matched the feeling they’d expect when they slid a finger over it. He tried turning the voltage off when users reached the top of the bump as well as matching the voltage to their perceived height of various parts of the image.

But neither effort felt right to users; the second one made most of them feel like the surface was raised but then plateaued. In the end, the pattern of electrovibration that felt to users most like the picture matched the frictional forces to the slope of the bump: the steeper the curve, the more voltage required to increase the friction.

While several other researchers could also create textures on tablet-size devices using vibration, Israr was the first to develop an algorithm for tactile rendering of 3-D features and textures on touch surfaces. Using the same theory he used to create his raised surface, Israr wrote an algorithm that allowed users to feel any “depth map,” a computer graphic that conveys information about the distance and surface of the object depicted. It was a breakthrough for Israr and his team.
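The finding that friction should follow slope rather than height can be illustrated with a short sketch. It assumes the depth map is a plain grid of heights and that the drive voltage scales linearly with the local slope. The function name, the scaling and the bell-shaped test surface are assumptions for the example and should not be read as Israr's actual algorithm.

```python
import math


def slope_to_voltage(depth_map, col, row, max_voltage=1.0, max_slope=1.0):
    """Return a drive level in [0, max_voltage] for the finger at (col, row).

    Steeper parts of the depth map get more voltage, hence more friction.
    The linear scaling and the clamping to max_slope are assumptions.
    """
    rows, cols = len(depth_map), len(depth_map[0])
    # Central differences, falling back to one-sided steps at the edges.
    c0, c1 = max(col - 1, 0), min(col + 1, cols - 1)
    r0, r1 = max(row - 1, 0), min(row + 1, rows - 1)
    dz_dx = (depth_map[row][c1] - depth_map[row][c0]) / max(c1 - c0, 1)
    dz_dy = (depth_map[r1][col] - depth_map[r0][col]) / max(r1 - r0, 1)
    slope = math.hypot(dz_dx, dz_dy)
    return max_voltage * min(slope / max_slope, 1.0)


# A bell-shaped bump, like the image shown to test subjects.
bump = [[math.exp(-((c - 10) ** 2 + (r - 10) ** 2) / 18.0) for c in range(21)]
        for r in range(21)]

print(slope_to_voltage(bump, 10, 10))  # top of the bump: flat, so no friction
print(slope_to_voltage(bump, 7, 10))   # on the flank: noticeable friction
```

In this sketch the voltage falls to zero at the very top of the bump, where the surface is flat, and is largest on the flanks, where the curve is steepest.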

Israr thinks the next step will involve seeing if the device can provide different sensations to each finger of a user. This would allow for another level of 3-D tactile illusions: Each finger of a hand gliding across a desk would reach the corner in succession; a piano keyboard app would allow users to feel a dip in several keys while playing a chord.

“The possibilities are huge if we could transmit signals to multiple fingers,” Israr says. “Imagine what this could be used for. We could have the potential to create 3-D interfaces for the blind. We could feel clothing before we bought it online. We could transmit touch when talking on Skype.”

Haptic Ending

The potential application for a haptic screen is perhaps best shown through one of Israr’s favorite illusions on the TeslaTouch. In his office, as Quintanilla handles the tablet, he comes upon an image of a rectangle on a grid, like something out of middle-school geometry homework. Israr guides Quintanilla’s finger to the corner of the rectangle and asks him to drag it.

TeslaTouch re-creates the sensation of texture by varying the electric fields on a touch screen. As a finger slides across, the difference in charge feels like friction. | Disney Research

When Quintanilla moves his hand across the screen, the TeslaTouch provides a frictional force on his finger. The drag force is released just as the polygon’s corner reaches another vertex on the grid, making it feel as if Quintanilla is snapping the corner of the rectangle into place. “OK, I can feel it releasing,” Quintanilla says. “I just got it to a point, right?”
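The snapping illusion comes down to a simple rule: hold a drag force while the corner is between grid points, and release it when the corner arrives at one. The sketch below shows that rule under stated assumptions. The function name, the snap radius and the on/off friction levels are all hypothetical.

```python
import math


def drag_friction(corner_x, corner_y, grid_spacing, snap_radius, drag_level=1.0):
    """Return the friction level to apply while a corner is being dragged.

    Friction stays at drag_level until the corner comes within snap_radius
    of the nearest grid vertex, then drops to zero (the "release").
    """
    nearest_x = round(corner_x / grid_spacing) * grid_spacing
    nearest_y = round(corner_y / grid_spacing) * grid_spacing
    distance = math.hypot(corner_x - nearest_x, corner_y - nearest_y)
    return 0.0 if distance <= snap_radius else drag_level


print(drag_friction(23, 40, grid_spacing=20, snap_radius=2))  # still dragging
print(drag_friction(39, 41, grid_spacing=20, snap_radius=2))  # released at a vertex
```

Because the rule depends on where the finger is at each moment, the feedback responds to movement rather than staying fixed in one place, which is the quality Quintanilla singles out.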

“I love this illusion because it shows the potential of this technology,” Israr says. “We need feedback on our fingers to tell us how we’re moving across the screen. Once the feedback is in place, it becomes easier to use the screen to create.”

Quintanilla agrees. “What makes this different from other haptic devices I’ve tried out is that it’s responding to my movements,” he says. “I’m not just feeling something that’s staying in one place.”

Even though Quintanilla’s hoped-for tool for the blind may be years away, technology that could enhance how we use gadgets in unimagined ways is on its way. Right now, Israr isn’t too concerned about how the technology will end up being used. “Nobody really has a good idea yet of what it’s good for,” he says. “We want to build the interface, and we’re curious what it will be used to create.”

One of the earliest criticisms of touch screens was that our movements on them were imprecise. That’s partly because there’s no feedback to tell if our fingers are in the exact right place. If we have buttons we can feel, or textures we can sense moving, we’ll be able to use them much more easily, Israr says.

“Haptics can help make technology easier and more intuitive to use,” he says. “It can help people create.” Once people can physically feel the images they’re interacting with, there’ll be no going back.

[This article originally appeared in print as “Hooked on a Feeling.”]

**Update 25 Aug 2015: The device called the TeslaTouch has been renamed the Electrovibration Haptic Device