When we’re presented with a choice, we carefully weigh the alternatives and choose the option that makes the most sense — or do we? Only recently has science begun to unravel how we really make decisions.

In the face of stress or time pressure, or even seemingly unrelated cues, our assessment of situations and the choices we ultimately make can be colored by innate biases, flawed assumptions and prejudices born of personal experience. And we’re clueless about how they influence our judgments. These unconscious processes can lead us to make decisions that, in fact, don’t really make much sense at all.

If you’re not convinced, go to a group of people and offer each person a dollar. Do this five times, each time asking if the individual wants to buy a $1 lottery ticket. Then offer $5 all at once to a second group, and ask the people how many lottery tickets they would like to buy. You’d think both groups would buy the same number of tickets — after all, they got the same amount of money.

Nope. Researchers at Carnegie Mellon found that the first group would consistently buy twice as many lottery tickets as the group that was given the same amount of money but only one chance to buy lottery tickets.

Here’s another way to see irrational, unconscious biases in action: Change your hairstyle. This experience inspired Malcolm Gladwell to write the bestseller Blink, which looked at the science of snap judgments. After he grew his hair long, his life changed “in very small but significant ways.” He got speeding tickets, was pulled out of airport security lines, and was questioned by police in a rape case, even though the prime suspect was much taller.

The lottery and hair scenarios provide real-world examples at odds with traditional theories used to predict human behavior. These scenarios tell us that decision-making can depend on perspective and unconscious stereotypes. These kinds of biases do serve an evolutionary purpose, many other researchers have found. In some cases, making snap decisions and following your gut can be an advantage, especially in high-pressure, time-sensitive situations.

The hidden, unconscious processes at work when we decide are so powerful that efforts to uncover and understand them have won at least two researchers the Nobel Prize in recent years.

Checks and Balances

Daniel Kahneman won the 2002 Nobel Prize in Economics for his widely referenced work in the area of human judgment. Kahneman and others in his field divide our decision-making process into two systems: System 1, with its nearly instantaneous impressions of people and situations; and System 2, with its rational analysis and ability to handle complexity. These two systems compete and sometimes overlap, acting as checks on each other.

SYSTEM 1

That long-haired guy looks suspicious. The stock market is going down — I better sell NOW!

This system generally offers preferences based on patterns picked up by our brains without our awareness. This is the source of both unconscious biases that may lead to bad judgments, and the insight from experts with significant experience in a specific situation.

This system “tends to be fast, non-conscious and emotionally charged,” says Michael Pratt, a professor of organizational change at Boston College.

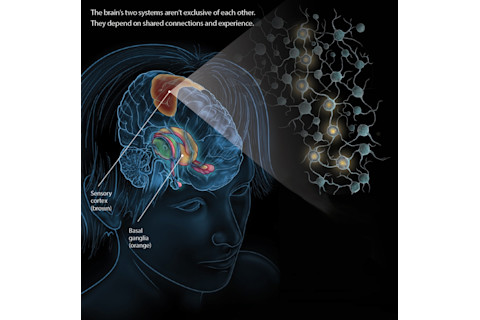

Neuroscientists often call this implicit knowledge. According to Paul Reber, a neuroscientist at Northwestern University, it is the result of connections between groups of neurons that form throughout the sensory cortex after repeated exposure to two or more stimuli together or in quick succession.

Qualities: Fast, Automatic, Associative

Advantages: It is faster and can take on an automatic quality, which makes it useful for high-pressure, high-stress situations, like combat or a basketball game. It can be harnessed and, through training, be used to speed up reaction times and save mental energy.

Disadvantage: It’s not the best system for some kinds of structured problems, such as those based on math, in which there is one correct answer. Its predictions and assumptions based on the sum of previous experiences might not represent current reality. It is vulnerable to unconscious biases.

System 1: The basal ganglia, which help speed up circuit formation, and the sensory cortex are major players. In the sensory cortex, groups of neurons develop patterns of firing that are re-established more easily with repeated exposure to stimuli. This is key to those split-second decisions made under pressure. (Credit: Evan Oto/Science Source. Neurons by Jay Smith)

SYSTEM 2

That baseball player reminds me of a young David Ortiz, but appearances can be misleading. I should ignore the compulsion to sell when the stock market is going down. That is just fear talking.

These decisions are analytical, deliberate and “rational.” A dualistic notion of decision-making has been around forever. “People assume that System 1 is bad and System 2 is good,” says Pratt. “People have been unreflective about that until recently.”

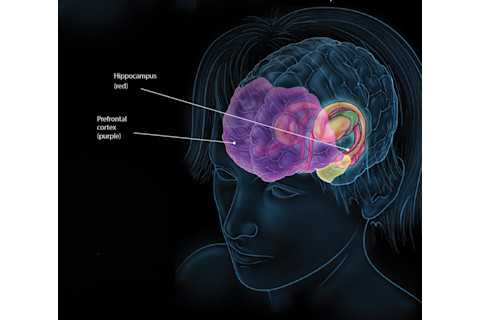

Neuroscientists have traditionally called the information we use to make these decisions explicit knowledge. It relies on the traditional memory systems of the brain, such as the hippocampus and the prefrontal cortex, which is integral to working memory.

One might liken the hippocampus to the brain’s filing clerk for long-term memories — lose it, and you become an amnesiac, incapable of retrieving memories or storing new ones. The prefrontal cortex is the seat of the brain’s executive function. That’s where we hold information we need temporarily to calculate how much to pay the babysitter or what to buy for dinner.

Qualities: Slow, Controlled, Rule-Governed

Advantages: It allows you to consider the consequences of a decision before you make it. It also lets you apply complex rules and information through studied, analytical thought. And it can insulate you from the corrosive effects of fear and emotions.

Disadvantage: It’s slower and can break down under stress, causing you to “choke.”

System 2: The prefrontal cortex, which is the seat of the brain’s executive function, and the hippocampus — crucial to memory storage and recollection — work together as the foundation of explicit, rule-based decision-making. (Credit: Evan Oto/Science Source. Neurons by Jay Smith)

A Tale of Two Horses

Ancient Greece Plato compares the human will to a charioteer driving two horses, one representing our rational or moral impulses, and the other our irrational passions and appetites.

1764 Italian criminologist and economist Cesare Beccaria publishes an essay called “On Crimes and Punishments,” which would form the basis of what’s called rational choice theory: People will act in their own best interest. Beccaria argued for the principle of deterrence in dealing with crime. He contended that punishments should be just severe enough to offset any benefit from the crime. Beccaria’s ideas would establish the foundation of modern economic theory.

1890 Psychologist William James provides what some consider the modern origins of dual process theory, speculating that there are two ways that people decide: associative and true reasoning.

1936 Management theory pioneer Chester Irving Barnard argues that mental processes fall into two distinct categories: logical (conscious thinking) and non-logical (non-reasoning). Although the categories could be melded, Barnard believed, scientists relied mainly on logical processes, and business executives on non-logical when making decisions.

1950s Herbert Simon analyzes the role of intuitive decision-making in management in a scientific way. When people make decisions, their rationality is limited by time and knowledge. Choices are, by necessity, “good enough,” Simon says.

1953 A patient known as H.M. undergoes experimental brain surgery to halt his epileptic seizures. For the first time, researchers find that decisions based on unconscious learning, or knowledge we don’t know we know, may rely on entirely different brain pathways than the parts of the brain we use when we make conscious, rational choices.

Late 1960s In a series of experiments, psychologists Daniel Kahneman and Amos Tversky demonstrate the downside of the way humans make decisions, identifying several unconscious, systematic biases that consistently distort human judgment. Kahneman would win the 2002 Nobel Prize in Economics for this work. (Tversky died in 1996.)

1979 Kahneman and Tversky introduce prospect theory, which describes how people make choices in the face of risk. Rather than weighing final outcomes alone, individuals are more likely to base their decisions on the potential value of losses and gains and on how those gains and losses will make them feel. Scenarios that are more vivid or emotionally charged can exert more power than less emotionally charged but equally probable scenarios.

1998 Psychologist Gary Klein publishes Sources of Power, based on his earlier work in the mid-1980s. The book would form the basis for the new field of naturalistic decision-making. After studying experienced firefighters, military commanders and nurses, Klein contended that intuition was not inherently bad; it’s just less accurate when based on limited experience. He argued that experts make decisions in high-stress situations, under time pressure, with a blend of Systems 1 and 2: They notice a pattern, get an intuition about how to handle the situation, and then use System 2 to simulate and evaluate that intuition.

Implicit Learning

In 1953, a patient named Henry Molaison underwent an experimental brain surgery that halted his seizures. But then Molaison couldn’t form new long-term memories. His misfortune made him famous.

By studying “H.M.” and other amnesiacs, neuroscientists were able to prove the role of the hippocampus and related structures in long-term memory formation. Molaison’s experiences also shed light on unconscious, or implicit, learning.

The location of the hippocampus within the brain. (Credit: Sebastian Kaulitzk/Shutterstock)

Molaison was given a battery of tests, which confirmed his complete inability to form long-term memories. But one test contradicted the other results. Ten times across three consecutive days, he traced a star on paper while a barrier hid his hand and he could watch it only in a mirror. His speed increased steadily, but each day when he arrived at the lab, he had no memory of learning to trace the star.

Years later, Molaison was shown 20 line drawings of common objects and animals in a series of sessions. He eventually was able to identify the drawings even with mere fragments. An hour later, he had no memory of ever taking the test. Yet when he took it again, his scores still improved. On some level, he had retained his ability to correctly classify the fragments.

This form of learning is now known as implicit learning.

Unconscious Biases

Starting in the late 1960s, Kahneman and Tversky began a collaboration that would eventually overturn the way people thought about decision-making in medicine, economics and a wide range of other fields.

They focused on heuristics — a series of unconscious rules or biases — and how they can consistently lead us in the wrong direction.

The simplest and most powerful one is called the availability heuristic. Sometimes we grasp for the wrong answer on instinct, simply because it is easier to access and thus “feels” correct. Kahneman and Tversky designed and administered a series of questions to university students — and their answers consistently went against what one would consider rational thinking.

Students listened to recordings of lists of 39 names read aloud. Some of the names belonged to very famous people, like Richard Nixon, and others to public figures who were less well-known. One list had 19 very famous male names and 20 less-famous female names. A second list included 20 less-famous male names and 19 very famous female names. The students were then asked whether the list of names included more men or more women. When the men in the list were more famous, a majority of participants incorrectly thought there were more men on the list, and vice versa for women. Tversky and Kahneman’s interpretation: Judgments of proportion are based on “availability.” Students could more easily recall the names of better-known people.

High-Stakes Decisions

In the 1980s, Klein wanted to know how people made really hard decisions under extreme time pressure and uncertainty. First responders and experienced military commanders seemed to make decisions — the right decisions — under pressure all the time. How did they do it?

To get to the bottom of this phenomenon, Klein visited fire stations across the Midwest. When he started out, Klein suspected that expert commanders picked a limited range of options to choose from and then carefully weighed the pros and cons. Klein expected a rational, logical approach to unfold in every commander’s conscious mind — an approach based on System 2.

To his surprise, Klein consistently found the commanders looked at just one option. They “knew” what to do. By the time they became aware of the approach, they’d already decided. Sometimes after the best approach popped into their mind, they consciously imagined how it would play out before actually implementing it, to make sure it would work. But for the most part, their first idea was the only one they considered.

“It really shook us because we didn’t expect that,” Klein recalls. “How can you just look at one option? The answer was that they had 20 years of experience.”

Twenty years’ experience gave the firefighters the ability to do what Klein called pattern matching. The process seemed to involve complicated analysis of sensory information that occurs, somehow, entirely without their awareness. After the best approach popped into their heads, the commanders didn’t compare it with others. Instead, they just acted, their thought process akin to the muscle memory of a trained boxer.

“It was unconscious, it was intuitive, but it wasn’t magical,” Klein says. “You look at a situation and you say, ‘I know what’s going on here, I’ve seen it before, I can recognize it.’ ”