This story was originally published in our July/August 2022 issue as "A Closer Look."

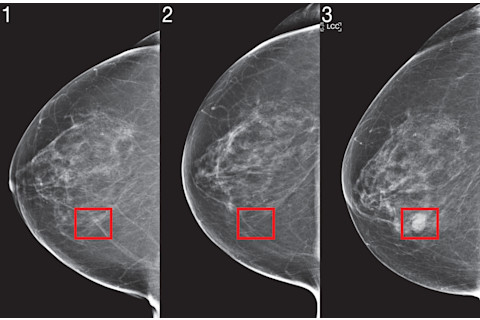

Looking at the mammogram image, with its spiderweb of faint gray lines showing dense breast tissue, you wouldn’t suspect anything was amiss. No human radiologist would hesitate to give this Massachusetts General Hospital patient a clean bill of health. But the Mirai artificial-intelligence system, created at MIT, thinks differently. When it scanned the mammogram, it flagged the patient as high risk for getting breast cancer in the next five years. Ultimately, the machine’s hunch proved correct: the patient indeed developed breast cancer, just four years after the image was taken.

Since about 90 percent of people who develop breast cancer don’t have a known genetic mutation, the disease’s emergence can be highly unpredictable. Regina Barzilay, an MIT computer scientist now working on Mirai, was blindsided when she got her own breast cancer diagnosis back in 2014. “It came to me as the biggest surprise,” she says, as no one in her family had ever had the disease. Barzilay’s firsthand experience drove her to help forecast the emergence of breast cancer in other people: Along with MIT graduate student Adam Yala, she decided to draw on her AI expertise, creating an image-scanning program that would alert doctors to impending tumors before they appeared.

Mirai joins a pack of existing AI systems that can detect or predict very-early-stage disease. Trained on thousands of images from people in different stages of illness, these systems excel at flagging disease signs based on patterns sometimes invisible to the naked eye. In addition to Mirai, researchers have developed algorithms that can predict the risk of lung cancer on CT scans, assess future heart disease risk from MRI images and predict which suspicious skin spots will become cancerous.

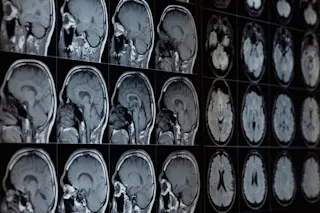

But forecasts that AI systems will replace doctors are greatly exaggerated. “It’s not as if people will walk into an MRI scanner and a computer will generate a report,” says Deepak L. Bhatt, an interventional cardiologist and Harvard Medical School professor. “There’ll still be a physician that takes a look at the image and interprets it in the clinical context.” In fact, most doctors actually welcome the prospect of an AI assist as they decide how best to treat each patient.

It may still be years before the newest AI systems are fully deployed; before they enter clinics and hospitals, they will need to go through further testing and clinical trials to prove their mettle. And once they do, doctors will need to assume broader oversight in the diagnostic process, seamlessly blending AI insights with their own clinical know-how. But assuming these AI systems do pass through the approval gauntlet, they could help physicians shoulder the workload of scanning each image for signs of life-threatening disease — and open up possibilities for earlier, more targeted treatment.

The Mirai AI system was able to identify a patient as high risk four years before they were diagnosed with cancer. The images above were taken on the first (1), second (2) and third (3) year prior to the diagnosis. (Credit: MIT Computer Science and Artificial Intelligence Laboratory)

Diagnosis and Data

Part of what earns Mirai and its peers the “intelligent” moniker is that, like humans, these AI systems are capable of learning based on new information. Researchers train them by showing them a large cache of scans or images, like the 200,000-plus mammograms the Mirai team fed into the computer. The researchers also supply the computer with descriptive information that was up to date when each image was taken, such as whether the image contained cancer or not.

From this avalanche of data, the systems learn which distinct image features — say, near-imperceptible blemishes cloaked by dense breast tissue in a mammogram — denote disease or predict its emergence. “The model [is] looking only at the image, but looking at it in a sophisticated fashion, learning to use all the subtle patterns that are there,” Yala says.

Doctors already use some basic computing tools to predict who will develop certain diseases. The Tyrer-Cuzick modeling software, for example, calculates patients’ future breast cancer risk based on health history factors like whether they took hormone-replacement therapy or had periods before age 12. But the newest AI systems up the ante by analyzing images to extract more precise prognostic data. This past year, the MIT team showed that Mirai lived up to its billing as a cancer prediction engine. The system flagged more than 40 percent of patients who would develop breast cancer within five years as “high risk.” That’s almost twice as many as the Tyrer-Cuzick model based on personal health data, which only labeled 23 percent of patients who later got breast cancer as high risk.
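For readers who want to see the arithmetic behind that comparison, here is a minimal sketch in Python. It is not the MIT team's code or data. The cohort of 100 patients is a hypothetical toy example, and the function name `share_flagged` is made up for illustration. The 40 percent figure is treated as a lower bound, since the study reported "more than 40 percent."

```python
def share_flagged(flags):
    """Return the fraction of patients in `flags` (a list of booleans) marked high risk."""
    return sum(flags) / len(flags)


# Hypothetical toy cohort: 100 patients who all developed breast cancer within five years.
# True means the model had labeled that patient "high risk" beforehand.
image_model_flags = [True] * 40 + [False] * 60    # image-based model, roughly 40 percent flagged
history_model_flags = [True] * 23 + [False] * 77  # health-history model, 23 percent flagged

image_rate = share_flagged(image_model_flags)
history_rate = share_flagged(history_model_flags)
ratio = image_rate / history_rate

print(f"{image_rate:.0%} vs {history_rate:.0%}, ratio {ratio:.2f}")
```

With these toy numbers the ratio comes out to about 1.7, which is where "almost twice as many" comes from. Note that both percentages count only patients who later got cancer, so they say nothing on their own about how many healthy patients each model flagged.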

Other new AI algorithms are equally adept at picking up different kinds of malignancy risk. One AI system performed as well as human radiologists in predicting whether early lung nodules on CT scans would turn into cancer, according to a study published in the journal Radiology last May. Meanwhile, in early 2021, an MIT system posted a 90 percent accuracy rate in detecting skin lesions with future melanoma potential. The applications of AI image analysis also go beyond cancer detection: Between 2016 and 2018, a British AI system that analyzed cardiac magnetic resonance scans — which depict blood flow through a patient’s heart — proved adept at predicting which patients would later experience a major adverse cardiovascular event, such as a stroke or even death.

Because algorithms can detect patterns humans can’t, thereby expediting a doctor’s assessment, AI systems like these could help enable broader national screening programs for lung cancer or heart disease. “It could be a great equalizer for health care,” says University of Virginia urologist Kirsten Greene. “People without access to a top-20 medical center — it won’t matter, because technology will at least try to level the playing field.”

Future Doctors

But that kind of seismic change won’t happen right away. To integrate these systems’ insights into the way they work, specialists will need a keen understanding of the technology’s strengths and weaknesses. AI systems are very good at tackling what doctors call “bread-and-butter” cases, says Google AI specialist Daniel Tse. In other words, they swiftly spot the most common signs of disease on scans, because they see so many of these cases when they are being trained.

Where AI tends to fall short, however, is in parsing out-of-the-ordinary image or scan results; systems may miss these more often, as they have typically had less exposure to them during the training process. And some researchers fear new AI models’ focus on minute signs of disease could cause an epidemic of overdiagnosis, leading to unnecessary biopsies and surgeries.

For reasons like these, AI systems can’t serve as the “robot doctors” some may have envisioned, neatly filling the roles of human specialists. “At this point, no one credible is talking about those things,” Bhatt says. “What they’re really talking about is AI augmenting what physicians already do, increasing their accuracy.” What’s likely to happen in the near term, Bhatt says, is that specialists will assume more of a managerial role in diagnosis and planning, interpreting the data in front of them (including sophisticated AI analysis) in ways that suit each case.

If an AI algorithm flags a patient as high risk for breast cancer, for instance, her physician will develop a treatment plan with that in mind. But the physician will also weigh an array of additional factors, like the patient’s prior cancer history and physical tolerance for aggressive treatment. On top of that, doctors using AI image analysis will find themselves confronting thorny ethical questions: If a mammogram shows a patient is highly likely to get breast cancer in the next five years, should she get preemptive chemotherapy or even a mastectomy without a definite diagnosis?

Situations like this demand the kinds of complex judgment calls no automated system can hope to make. For now, the biggest story in AI isn’t how image analysis is making doctors obsolete. It’s how it’s adding critical tools to specialists’ arsenals, making their judgment better — and more on-target — than before.