Every day, we conduct science experiments, posing an “if” with a “then” and seeing what shakes out. Maybe it’s just taking a slightly different route on our commute home or heating that burrito for a few seconds longer in the microwave. Or it could be trying one more variation of that gene, or wondering what kind of code would best fit a given problem. Ultimately, this striving, questioning spirit is at the root of our ability to discover anything at all. A willingness to experiment has helped us delve deeper into the nature of reality through the pursuit we call science.

A select batch of these science experiments has stood the test of time in showcasing our species at its inquiring, intelligent best. Whether elegant or crude, and often with a touch of serendipity, these singular efforts have delivered insights that changed our view of ourselves or the universe.

Here are nine such successful endeavors — plus a glorious failure — that could be hailed as the top science experiments of all time.

Eratosthenes Measures the World

Experimental result: The first recorded measurement of Earth’s circumference

When: end of the third century B.C.

Just how big is our world? Of the many answers from ancient cultures, a stunningly accurate value calculated by Eratosthenes has echoed down the ages. Born around 276 B.C. in Cyrene, a Greek settlement on the coast of modern-day Libya, Eratosthenes became a voracious scholar — a trait that brought him both critics and admirers. The haters nicknamed him Beta, after the second letter of the Greek alphabet. University of Puget Sound physics professor James Evans explains the Classical-style burn: “Eratosthenes moved so often from one field to another that his contemporaries thought of him as only second-best in each of them.” Those who instead celebrated the multitalented Eratosthenes dubbed him Pentathlos, after the five-event athletic competition.

That mental dexterity landed the scholar a gig as chief librarian at the renowned library in Alexandria, Egypt. It was there that he conducted his famous experiment. He had heard of a well in Syene, a Nile River city to the south (modern-day Aswan), where the noon sun shone straight down, casting no shadows, on the date of the Northern Hemisphere’s summer solstice. Intrigued, Eratosthenes measured the shadow cast by a vertical stick in Alexandria on this same day and time. He determined that the sun’s rays there fell 7.2 degrees from the vertical, or 1/50th of a circle’s 360 degrees.

Knowing, as many educated Greeks did, that Earth was spherical, Eratosthenes realized that if he knew the distance between the two cities, he could multiply that figure by 50 to gauge Earth’s curvature, and hence its total circumference. Supplied with that distance, about 5,000 stades, Eratosthenes deduced Earth’s circumference as 250,000 stades, a Hellenistic unit of length equaling roughly 600 feet. The span equates to about 28,500 miles, well within the ballpark of the correct figure of 24,900 miles.
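The arithmetic fits in a few lines of Python. This minimal sketch assumes the traditionally reported Alexandria-to-Syene distance of about 5,000 stades and the rough conversion of 600 feet per stade; the function name is illustrative.

```python
FEET_PER_STADE = 600   # rough conversion; the stade's true length is uncertain
FEET_PER_MILE = 5280

def circumference_from_shadow(shadow_angle_deg, distance_stades):
    """Scale the distance between two sites up to a full circle.

    Because the sun's rays arrive parallel, the shadow angle at Alexandria
    equals the arc between the two cities, so the distance between them
    covers angle/360 of Earth's circumference.
    """
    fraction_of_circle = shadow_angle_deg / 360.0
    return distance_stades / fraction_of_circle

circumference_stades = circumference_from_shadow(7.2, 5000)
circumference_miles = circumference_stades * FEET_PER_STADE / FEET_PER_MILE
print(f"{circumference_stades:,.0f} stades, about {circumference_miles:,.0f} miles")
```

With a 600-foot stade the result comes to roughly 28,400 miles; a slightly different stade length shifts the figure, which is why estimates of Eratosthenes' accuracy vary.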

Eratosthenes’ motive for getting Earth’s size right was his keenness for geography, a field whose name he coined. Fittingly, modernity has bestowed upon him one more nickname: father of geography. Not bad for a guy once dismissed as second-rate.

William Harvey Takes the Pulse of Nature

Experimental result: The discovery of blood circulation

When: Theory published in 1628

Boy, was Galen wrong.

The Greek physician-cum-philosopher proposed a model of blood flow in the second century A.D. that, despite being full of whoppers, prevailed for nearly 1,500 years. Among its claims: The liver constantly makes new blood from food we eat; blood flows throughout the body in two separate streams, one infused (via the lungs) with “vital spirits” from air; and the blood that tissues soak up never returns to the heart.

Overturning all this dogma took a series of often gruesome experiments.

High-born in England in 1578, William Harvey rose to become royal physician to King James I, affording him the time and means to pursue his greatest interest: anatomy. He first hacked away (literally, in some cases) at the Galenic model by exsanguinating — draining the blood from — test critters, including sheep and pigs. Harvey realized that if Galen were right, an impossible volume of blood, exceeding the animals’ size, would have to pump through the heart every hour.

To drive this point home, Harvey sliced open live animals in public, demonstrating their puny blood supplies. He also constricted blood flow into a snake’s exposed heart by finger-pinching a main vein. The heart shrank and paled; when pierced, it poured forth little blood. By contrast, choking off the main exiting artery swelled the heart. Through studies of the slow heartbeats of reptiles and animals near death, he discerned the heart’s contractions, and deduced that it pumped blood through the body in a circuit.

According to Andrew Gregory, a professor of history and philosophy of science at University College London, this was no easy deduction on Harvey’s part. “If you look at a heart beating normally in its normal surroundings, it is very difficult to work out what is actually happening,” he says.

Experiments with willing people, which involved temporarily blocking blood flow in and out of limbs, further bore out Harvey’s revolutionary conception of blood circulation. He published the full theory in a 1628 book, De Motu Cordis [The Motion of the Heart]. His evidence-based approach transformed medical science, and he’s recognized today as the father of modern medicine and physiology.

Gregor Mendel Cultivates Genetics

Experimental result: The fundamental rules of genetic inheritance

When: 1855-1863

A child, to varying degrees, resembles a parent, whether it’s a passing resemblance or a full-blown mini-me. Why?

The profound mystery behind the inheritance of physical traits began to unravel a century and a half ago, thanks to Gregor Mendel. Born in 1822 in what is now the Czech Republic, Mendel showed a knack for the physical sciences, though his farming family had little money for formal education. In 1843, following the advice of a professor, he joined the Augustinian order, a monastic group that emphasized research and learning.

Ensconced at a monastery in Brno, the shy Gregor quickly began spending time in the garden. Fuchsias in particular grabbed his attention, their daintiness hinting at an underlying grand design. “The fuchsias probably gave him the idea for the famous experiments,” says Sander Gliboff, who researches the history of biology at Indiana University Bloomington. “He had been crossing different varieties, trying to get new colors or combinations of colors, and he got repeatable results that suggested some law of heredity at work.”

These laws became clear with his cultivation of pea plants. Using paintbrushes, Mendel dabbed pollen from one plant to another, precisely pairing thousands of plants with certain traits over a stretch of about seven years. He meticulously documented how crossing yellow-pea plants with green-pea plants, for instance, always yielded yellow peas. Yet mating these yellow offspring together produced a generation where a quarter of the peas gleamed green again. Ratios like these led Mendel to coin the terms dominant (the yellow color, in this case) and recessive for traits governed by what we now call genes, which Mendel referred to as “factors.”
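A Punnett-square sketch in Python reproduces the 3:1 ratio described above. It assumes the modern textbook notation of Y for the dominant yellow factor and y for the recessive green one, and that each parent passes on either of its two factors with equal probability; the function names are illustrative.

```python
from collections import Counter
from itertools import product

def cross(parent1, parent2):
    """Count offspring genotypes from a cross such as 'Yy' x 'Yy'.

    Each parent contributes one of its two factors, and every pairing in
    the Punnett square is treated as equally likely.
    """
    # sorted() puts the uppercase (dominant) letter first, so 'yY' becomes 'Yy'
    return Counter("".join(sorted(a + b)) for a, b in product(parent1, parent2))

def color(genotype):
    """A single dominant 'Y' factor is enough to make the pea yellow."""
    return "yellow" if "Y" in genotype else "green"

def color_ratio(genotype_counts):
    """Collapse genotype counts into counts of visible pea colors."""
    colors = Counter()
    for genotype, count in genotype_counts.items():
        colors[color(genotype)] += count
    return colors

f1 = cross("YY", "yy")   # true-breeding yellow x true-breeding green
f2 = cross("Yy", "Yy")   # yellow offspring crossed with each other
print(color_ratio(f1))
print(color_ratio(f2))
```

The first generation comes out entirely yellow, while the second shows three yellow outcomes for every green one: the hidden recessive factor reappears only when a pea inherits it from both parents.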

He was ahead of his time. His studies received scant attention in their day, but decades later, when other scientists rediscovered and replicated Mendel’s experiments, they came to be regarded as a breakthrough.

“The genius in Mendel’s experiments was his way of formulating simple hypotheses that explain a few things very well, instead of tackling all the complexities of heredity at once,” says Gliboff. “His brilliance was in putting it all together into a project that he could actually do.”

Isaac Newton Eyes Optics

Experimental result: The nature of color and light

When: 1665-1666

Before he was that Isaac Newton — scientist extraordinaire and inventor of the laws of motion, calculus and universal gravitation (plus a crimefighter to boot) — plain ol’ Isaac found himself with time to kill. To escape a devastating outbreak of plague in his college town of Cambridge, Newton holed up at his boyhood home in the English countryside. There, he tinkered with a prism he picked up at a local fair — a “child’s plaything,” according to Patricia Fara, fellow of Clare College, Cambridge.

Let sunlight pass through a prism and a rainbow, or spectrum, of colors splays out. In Newton’s time, prevailing thinking held that light takes on the color of the medium it transits, like sunlight through stained glass. Unconvinced, Newton set up a prism experiment that proved color is instead an inherent property of light itself. This revolutionary insight helped lay the foundations of modern optics, fundamental to today’s science and technology.

Newton deftly executed the delicate experiment: He bored a hole in a window shutter, allowing a single beam of sunlight to pass through two prisms. By blocking some of the resulting colors from reaching the second prism, Newton showed that different colors refracted, or bent, differently through a prism. He then singled out a color from the first prism and passed it alone through the second prism; when the color came out unchanged, it proved the prism didn’t affect the color of the ray. The medium did not matter. Color was tied up, somehow, with light itself.

Partly owing to the ad hoc, homemade nature of Newton’s experimental setup, plus his incomplete descriptions in a seminal 1672 paper, his contemporaries initially struggled to replicate the results. “It’s a really, really technically difficult experiment to carry out,” says Fara. “But once you have seen it, it’s incredibly convincing.”

In making his name, Newton certainly displayed a flair for experimentation, occasionally delving into the self-as-subject variety. One time, he stared at the sun so long he nearly went blind. Another time, he wormed a long, thick needle under his eyelid, pressing on the back of his eyeball to gauge how it affected his vision. Although he had plenty of misses in his career, such as forays into occultism and dabbling in biblical numerology, Newton’s hits ensured his lasting fame.

Michelson and Morley Whiff on Ether

Experimental result: The way light moves

When: 1887

Say “hey!” and the sound waves travel through a medium (air) to reach your listener’s ears. Ocean waves, too, move through their own medium: water. Light waves are a special case, however. In a vacuum, with all media such as air and water removed, light somehow still gets from here to there. How can that be?

The answer, according to the physics en vogue in the late 19th century, was an invisible, ubiquitous medium delightfully dubbed the “luminiferous ether.” Working together at what is now Case Western Reserve University in Ohio, Albert Michelson and Edward W. Morley set out to prove this ether’s existence. What followed is arguably the most famous failed experiment in history.

The scientists’ hypothesis was this: As Earth orbits the sun, it constantly plows through ether, generating an ether wind. A light beam traveling in the same direction as the wind should move a bit faster than one sailing against it.

To measure the effect, minuscule though it would have to be, Michelson had just the thing. In the early 1880s, he had invented a type of interferometer, an instrument that brings beams of light together to create an interference pattern, like when ripples on a pond intermingle. A Michelson interferometer sends light toward a half-silvered mirror, which splits it in two, and the resulting beams travel at right angles to each other. After some distance, they reflect off mirrors back toward a central meeting point. If one beam’s journey takes longer than the other’s (say, because of the ether wind), the bright and dark bands of the resulting interference pattern shift.
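To give a sense of how small the hoped-for effect was, here is a rough Python estimate of the fringe shift ether theory predicted when the apparatus was turned 90 degrees. It assumes an ether wind equal to Earth's orbital speed of about 30 km/s, an effective arm length of about 11 meters (a figure commonly cited for the 1887 setup, which folded its light paths with extra mirrors) and a wavelength of 500 nanometers; treat all three as illustrative inputs rather than the experimenters' exact numbers.

```python
import math

C = 299_792_458.0  # speed of light, m/s

def expected_fringe_shift(arm_length_m, ether_speed_m_s, wavelength_m):
    """Fringe shift predicted by ether theory for a 90-degree rotation."""
    beta_sq = (ether_speed_m_s / C) ** 2
    base_time = 2 * arm_length_m / C  # round trip with no ether wind
    # Arm along the wind: downwind and upwind legs lengthen the round trip
    t_parallel = base_time / (1 - beta_sq)
    # Arm across the wind: the light must angle into the wind, a smaller delay
    t_perpendicular = base_time / math.sqrt(1 - beta_sq)
    # Difference in optical path between the two arms in one orientation
    path_difference = C * (t_parallel - t_perpendicular)
    # Turning the apparatus 90 degrees swaps the arms, doubling the change
    return 2 * path_difference / wavelength_m

shift = expected_fringe_shift(arm_length_m=11.0, ether_speed_m_s=30_000.0, wavelength_m=500e-9)
print(f"expected shift: about {shift:.2f} of a fringe")
```

Under these assumptions the predicted shift is a bit under half a fringe: small, but exactly the kind of change the instrument was built to detect.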

The researchers protected their delicate interferometer setup from vibrations by placing it atop a solid sandstone slab, floating almost friction-free in a trough of mercury and further isolated in a campus building’s basement. Michelson and Morley slowly rotated the slab, expecting the interference pattern to shift as the instrument’s arms swung into and out of line with the ether wind.

Instead, nothing. Light’s speed did not vary.

Neither researcher fully grasped the significance of their null result. Chalking it up to experimental error, they moved on to other projects. (Fruitfully so: In 1907, Michelson became the first American to win a Nobel Prize in the sciences, for optical instrument-based investigations.) But the huge dent Michelson and Morley unintentionally kicked into ether theory set off a chain of further experimentation and theorizing that led to Albert Einstein’s 1905 breakthrough: special relativity, a new paradigm of light.

(Credit: Mark Marturello)

Marie Curie’s Work Matters

Experimental result: Defining radioactivity

When: 1898

Few women are represented in the annals of legendary scientific experiments, reflecting their historical exclusion from the discipline. Marie Sklodowska broke this mold.

Born in 1867 in Warsaw, she moved to Paris at age 24 to further her studies in math and physics. There, she met and married physicist Pierre Curie, a close intellectual partner who helped her revolutionary ideas gain a foothold within the male-dominated field. “If it wasn’t for Pierre, Marie would never have been accepted by the scientific community,” says Marilyn B. Ogilvie, professor emeritus in the history of science at the University of Oklahoma. “Nonetheless, the basic hypotheses — those that guided the future course of investigation into the nature of radioactivity — were hers.”

The Curies worked together mostly out of a converted shed on the college campus where Pierre worked. For her doctoral thesis in 1897, Marie began investigating a newfangled kind of radiation, similar to X-rays and discovered just a year earlier. Using an instrument called an electrometer, built by Pierre and his brother, Marie measured the mysterious rays emitted by thorium and uranium. Regardless of the elements’ mineralogical makeup — a yellow crystal or a black powder, in uranium’s case — radiation rates depended solely on the amount of the element present.

From this observation, Marie deduced that the emission of radiation had nothing to do with a substance’s molecular arrangements. Instead, radioactivity (a term she coined) was an inherent property of individual atoms, emanating from their internal structure. Up until this point, scientists had thought atoms to be elementary, indivisible entities. Marie had cracked the door open to understanding matter at a more fundamental, subatomic level.

Curie was the first woman to win a Nobel Prize, in 1903, and one of a very select few people to earn a second Nobel, in 1911 (for her discovery of the elements radium and polonium and her isolation of radium).

“In her life and work,” says Ogilvie, “she became a role model for young women who wanted a career in science.”

Ivan Pavlov Salivates at the Idea

Experimental result: The discovery of conditioned reflexes

When: 1890s-1900s

Russian physiologist Ivan Pavlov scooped up a Nobel Prize in 1904 for his work with dogs, investigating how saliva and stomach juices digest food. While his scientific legacy will always be tied to doggie drool, it is the operations of the mind — canine, human and otherwise — for which Pavlov remains celebrated today.

Gauging gastric secretions was no picnic. Pavlov and his students collected the fluids that canine digestive organs produced, with a tube suspended from some pooches’ mouths to capture saliva. Come feeding time, the researchers began noticing that dogs experienced in the trials would start drooling into the tubes before they’d even tasted a morsel. Like numerous other bodily functions, the generation of saliva was considered a reflex at the time, an unconscious action that occurred only in the presence of food. But Pavlov’s dogs had learned to associate the appearance of an experimenter with meals, meaning the canines’ experience had conditioned their physical responses.

“Up until Pavlov’s work, reflexes were considered fixed or hardwired and not changeable,” says Catharine Rankin, a psychology professor at the University of British Columbia and president of the Pavlovian Society. “His work showed that they could change as a result of experience.”

Pavlov and his team then taught the dogs to associate food with neutral stimuli as varied as buzzers, metronomes, rotating objects, black squares, whistles, lamp flashes and electric shocks. Pavlov never did ring a bell, however; credit an early mistranslation of the Russian word for buzzer for that enduring myth.

The findings formed the basis for the concept of classical, or Pavlovian, conditioning. It extends to essentially any learning about stimuli, even if reflexive responses are not involved. “Pavlovian conditioning is happening to us all of the time,” says W. Jeffrey Wilson of Albion College, fellow officer of the Pavlovian Society. “Our brains are constantly connecting things we experience together.” In fact, trying to “un-wire” these conditioned responses is the strategy behind modern treatments for post-traumatic stress disorder, as well as addiction.

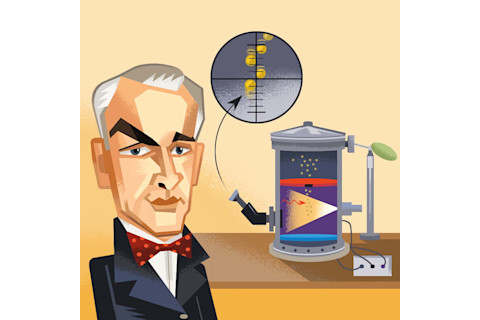

Robert Millikan Gets a Charge

Experimental result: The precise value of a single electron’s charge

When: 1909

By most measures, Robert Millikan had done well for himself. Born in 1868 in a small town in Illinois, he went on to earn degrees from Oberlin College and Columbia University. He studied physics with European luminaries in Germany. He then joined the University of Chicago’s physics department, and even penned some successful textbooks.

But his colleagues were doing far more. The turn of the 20th century was a heady time for physics: In the span of just over a decade, the world was introduced to quantum physics, special relativity and the electron — the first evidence that atoms had divisible parts. By 1908, Millikan found himself pushing 40 without a significant discovery to his name.

The electron, though, offered an opportunity. Researchers had struggled to establish whether the particle represented a fundamental unit of electric charge, the same in all cases. It was a critical determination for further developing particle physics. With nothing to lose, Millikan gave it a go.

In his lab at the University of Chicago, he began working with containers of thick water vapor, called cloud chambers, and varying the strength of an electric field within them. Clouds of water droplets formed around charged atoms and molecules before descending due to gravity. By adjusting the strength of the electric field, he could slow down or even halt a single droplet’s fall, countering gravity with electricity. Find the precise field strength at which the two forces balanced and, assuming the charge always came in the same basic unit, that would reveal the unit’s value.

When water turned out to evaporate too quickly, Millikan and his students, the often-unsung heroes of science, switched to a longer-lasting substance: oil, sprayed into the chamber by a drugstore perfume atomizer.
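The balancing idea can be sketched in Python. This is a simplified "balanced drop" calculation, not Millikan's full procedure (in practice a droplet's size had to be inferred from how fast it fell, via Stokes' law); it assumes the droplet's radius and the oil's density are already known, ignores air buoyancy, and uses hypothetical values chosen only for illustration.

```python
import math

G = 9.81                       # gravitational acceleration, m/s^2
ELEMENTARY_CHARGE = 1.602e-19  # C, the accepted value for one electron

def droplet_mass(radius_m, density_kg_m3):
    """Mass of a spherical droplet."""
    return density_kg_m3 * (4.0 / 3.0) * math.pi * radius_m ** 3

def charge_at_balance(radius_m, density_kg_m3, field_v_per_m):
    """Charge on a droplet held motionless by an upward electric force.

    At balance the electric force qE equals the weight mg, so q = mg / E.
    Air buoyancy is ignored in this sketch.
    """
    weight = droplet_mass(radius_m, density_kg_m3) * G
    return weight / field_v_per_m

# Hypothetical droplet: 1-micrometer radius, oil density 900 kg/m^3,
# held still by a field of 76,950 volts per meter.
q = charge_at_balance(1.0e-6, 900.0, 76_950.0)
multiple = q / ELEMENTARY_CHARGE
print(f"q = {q:.3e} C, about {multiple:.2f} elementary charges")
```

Repeating this for many droplets, and finding that every charge sits close to a whole-number multiple of one value, is what reveals that charge comes in a fixed unit.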

The increasingly sophisticated oil-drop experiments eventually determined that the electron did indeed represent a unit of charge. They estimated its value to within a whisker of the currently accepted charge of one electron (1.602 × 10⁻¹⁹ coulombs). It was a coup for particle physics, as well as for Millikan.

“There’s no question that it was a brilliant experiment,” says Caltech physicist David Goodstein. “Millikan’s result proved beyond reasonable doubt that the electron existed and was quantized with a definite charge. All of the discoveries of particle physics follow from that.”

Young, Davisson and Germer See Particles Do the Wave

Experimental result: The wavelike nature of light and electrons

When: 1801 and 1927, respectively

Light: particle or wave? Having long wrestled with this seeming either/or, many physicists settled on particle after Isaac Newton’s tour de force through optics. But a rudimentary, yet powerful, demonstration by fellow Englishman Thomas Young shattered this convention.

Young’s interests covered everything from Egyptology (he helped decode the Rosetta Stone) to medicine and optics. To probe light’s essence, Young devised an experiment in 1801. He cut two thin slits into an opaque object, let sunlight stream through them and watched how the beams cast a series of bright and dark fringes on a screen beyond. Young reasoned that this pattern emerged from light wavily spreading outward, like ripples across a pond, with crests and troughs from different light waves amplifying and canceling each other.
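The bright and dark fringes Young saw follow from simple geometry. The Python sketch below uses the standard small-angle result for two slits (bright fringes spaced wavelength × screen distance ÷ slit separation apart) and ignores the gradual dimming caused by each slit's finite width; the wavelength, slit separation and screen distance are hypothetical example values.

```python
import math

def fringe_spacing(wavelength_m, slit_separation_m, screen_distance_m):
    """Distance between neighboring bright fringes (small-angle approximation)."""
    return wavelength_m * screen_distance_m / slit_separation_m

def relative_intensity(y_m, wavelength_m, slit_separation_m, screen_distance_m):
    """Brightness at height y on the screen, relative to the central fringe.

    The two waves differ in path length by about d * y / L. Crests line up
    (bright) when that difference is a whole number of wavelengths and
    cancel (dark) when it is an odd number of half-wavelengths.
    """
    phase = math.pi * slit_separation_m * y_m / (wavelength_m * screen_distance_m)
    return math.cos(phase) ** 2

# Hypothetical setup: 550-nanometer light, slits 0.2 mm apart, screen 1 m away.
WAVELENGTH = 550e-9
SEPARATION = 0.2e-3
DISTANCE = 1.0

spacing = fringe_spacing(WAVELENGTH, SEPARATION, DISTANCE)
print(f"bright fringes every {spacing * 1000:.2f} mm")
for y in (0.0, spacing / 2, spacing):
    level = relative_intensity(y, WAVELENGTH, SEPARATION, DISTANCE)
    print(f"y = {y * 1000:.3f} mm: relative intensity {level:.3f}")
```

Halfway between bright fringes the two waves arrive exactly out of step and cancel, which is the dark band a particle-only picture of light could not explain.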

Although contemporary physicists initially rebuffed Young’s findings, countless reruns of these so-called double-slit experiments established that light really does move like a wave. “Double-slit experiments have become so compelling [because] they are relatively easy to conduct,” says David Kaiser, a professor of physics and of the history of science at MIT. “There is an unusually large ratio, in this case, between the relative simplicity and accessibility of the experimental design and the deep conceptual significance of the results.”

More than a century later, a related experiment by Clinton Davisson and Lester Germer showed the depth of this significance. At what is now called Nokia Bell Labs (then Bell Telephone Laboratories, in New York City), the physicists ricocheted electrons off a nickel crystal. The scattered electrons interacted to produce a pattern only possible if the particles also acted like waves. Subsequent double-slit-style experiments with electrons confirmed that matter and light can each act like both particles and waves. The paradoxical idea lies at the heart of quantum physics, which at the time was just beginning to explain the behavior of matter at a fundamental level.

“What these experiments show, at their root, is that the stuff of the world, be it radiation or seemingly solid matter, has some irreducible, unavoidable wavelike characteristics,” says Kaiser. “No matter how surprising or counterintuitive that may seem, physicists must take that essential ‘waviness’ into account.”

Robert Paine Stresses Starfish

Experimental result: The disproportionate impact of keystone species on ecosystems

When: Initially presented in a 1966 paper

Just like the purple starfish he crowbarred off rocks and chucked into the Pacific Ocean, Bob Paine threw conventional wisdom right out the window.

By the 1960s, ecologists had come to agree that habitats thrived primarily through diversity. The common practice of observing these interacting webs of creatures great and small suggested as much. Paine took a different approach.

Curious what would happen if he intervened in an environment, Paine ran his starfish-banishing experiments in tidal pools along the rugged coast of Washington state. The removal of this single species, it turned out, could destabilize a whole ecosystem. Unchecked, the starfish’s barnacle prey went wild, only to then be crowded out by marauding mussels. These shellfish, in turn, pushed out the limpets and algal species. The eventual result: a food web in tatters, with only mussel-dominated pools left behind.

Paine dubbed the starfish a keystone species, after the central stone that locks an arch into place. A revelatory concept, it meant that not all species contribute equally to a given ecosystem. Paine’s discovery had a major influence on conservation, shifting practice away from narrowly preserving an individual species for its own sake and toward ecosystem-based management strategies.

“His influence was absolutely transformative,” says Oregon State University’s Jane Lubchenco, a marine ecologist. She and her husband, fellow OSU professor Bruce Menge, met 50 years ago as graduate students in Paine’s lab at the University of Washington. Lubchenco, the administrator of the National Oceanic and Atmospheric Administration from 2009 to 2013, saw over the years the impact that Paine’s keystone species concept had on policies related to fisheries management.

Lubchenco and Menge credit Paine’s inquisitiveness and dogged personality for changing their field. “A thing that made him so charismatic was almost a childlike enthusiasm for ideas,” says Menge. “Curiosity drove him to start the experiment, and then he got these spectacular results.”

Paine died in 2016. His later work had begun exploring the profound implications of humans as a hyper-keystone species, altering the global ecosystem through climate change and unchecked predation.

Adam Hadhazy is based in New Jersey. His work has also appeared in New Scientist and Popular Science, among other publications. This story originally appeared in print as "10 Experiments That Changed Everything."