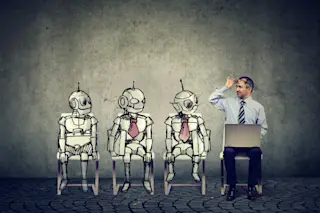

By early 2023, large language models (LLMs) were taking the world by storm. Arguably, ChatGPT led the revolution. The interactive chatbot allows users to make comments, ask questions, make requests, or enter into dialogue with the computer program. It is a kind of generative AI, which means that after training on enormous stores of data, it can produce something new that reads fairly convincingly, and eerily, as though it were created by a human.

Despite its ability to mimic human verbiage, ChatGPT was trained to do a straightforward job: use probability and training data to predict the text that follows a sequence of words. That ability could make it useful for people who work with text, says computer scientist Mark Finlayson of Florida International University. “It’s very good at generating generic, middle school-level English, and that’s a good starting point for 80 percent of what people write ...