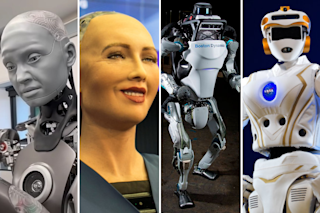

Horatio "Doc" Beardsley sits in a small, windowless room in the Entertainment Technology Center at Pittsburgh's Carnegie Mellon University, chatting away while he awaits a minor checkup. In a slightly blustery voice, he discusses his life experiences, describes his inventions, and answers questions, all with a corny sense of humor. "How old are you?" I ask. "I'm somewhere between dentures and death. More toward the death side," he answers.

With his big blue eyes, bushy gray beard and mustache, and creaky conversational style, he looks and sounds like an eccentric old scientist, exactly as his creators intended. Doc is a fake, a robot programmed to respond to spoken keywords with canned lines. He will start talking spontaneously after 6.5 seconds of silence, feign forgetfulness if he cannot match input to output, and generally bluff his way through the art of conversation.
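For readers curious what that kind of bluffing might look like in software, here is a minimal sketch of the three behaviors just described: keyword matching, a silence timeout, and a fallback when nothing matches. It is not Doc's actual program. The function name `respond`, the keyword table, and the placeholder fallback lines are all assumptions made for illustration; only the 6.5-second figure and the quoted answer about his age come from the reporting above.

```python
import re
from typing import Optional

SILENCE_TIMEOUT_S = 6.5  # silence threshold reported in the article

# Hypothetical keyword table; only the "old" line is a real quote from Doc.
CANNED_LINES = {
    "old": "I'm somewhere between dentures and death. More toward the death side.",
}

# Invented placeholder lines, not things Doc is known to say.
FORGETFUL_LINE = "Hmm, it has slipped my mind."
SPONTANEOUS_LINE = "Did I ever tell you about my inventions?"


def respond(heard: str, silence_s: float = 0.0) -> Optional[str]:
    """Pick a canned line for the recognized speech, or None to keep waiting."""
    words = re.findall(r"[a-z']+", heard.lower())
    if not words:
        # Nothing was said: speak up only once the silence has lasted long enough.
        return SPONTANEOUS_LINE if silence_s >= SILENCE_TIMEOUT_S else None
    for keyword, line in CANNED_LINES.items():
        if keyword in words:
            return line
    # Speech was heard but no keyword matched: feign forgetfulness.
    return FORGETFUL_LINE


if __name__ == "__main__":
    print(respond("How old are you?"))
    print(respond("What is the weather like?"))
    print(respond("", silence_s=7.0))
```

Nothing in the sketch understands the question. It only spots a word and plays back a line, which is the point of the bluff.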

Not long ago, computer scientists aspired to create silicon brains that ...