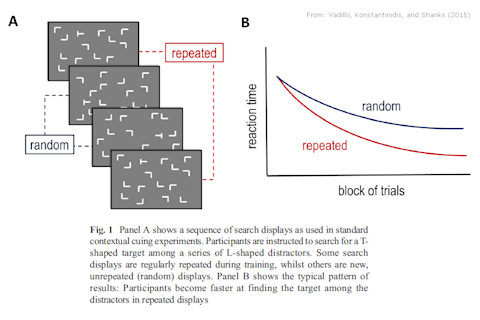

Can we learn without being aware of what we're learning? Many psychologists say that 'unconscious', or implicit, learning exists. But in a new paper, London-based psychologists Vadillo, Konstantinidis, and Shanks call the evidence for this into question. Vadillo et al. focus on one particular example of implicit learning, the contextual cueing paradigm. This involves a series of stimulus patterns, each consisting of a number of "L" shapes and one "T" shape in various orientations. For each pattern, participants are asked to find the "T" as quickly as possible. Some of the stimulus patterns are repeated more than once. It turns out that people perform better when the pattern is one that they've already seen. Thus, they must be learning something about each pattern.

What's more, this learning effect is generally seen as being unconscious because participants typically cannot consciously remember which patterns they've actually seen. As Vadillo et al. explain:

Usually, the implicitness of this learning is assessed by means of a recognition test conducted at the end of the experiment. Participants are shown all the repeating patterns intermixed with new random patterns and are asked to report whether they have already seen each of those patterns. The learning effect... is considered implicit if... participants’ performance is at chance (50% correct) overall.

In most studies using the contextual cueing paradigm, the learning effect is statistically significant (p < 0.05), but the conscious recognition effect is not significant (p > 0.05). Case closed? Not so fast, say Vadillo et al. The problem, essentially, is that the lack of recognition might be a false negative result. As they put it:

Null results in null hypothesis significance testing are inherently ambiguous. They can mean either that the null hypothesis is true or that there is insufficient evidence to reject it.

In contextual cueing and other unconscious learning paradigms, a negative (null) result forms a key part of the claimed phenomenon. Unconscious learning relies on positive evidence for learning and negative evidence for awareness. Vadillo et al. say that the problem is that negative results are

Surprisingly easy to obtain by mere statistical artefacts. Simply using a small sample or a noisy measure can suffice to produce a false negative... these problems might be obscuring our view of implicit learning and memory in particular and, perhaps, implicit processing in general

They reviewed published studies on the contextual cueing effect. A large majority (78.5%) reported no significant evidence of conscious awareness. But, pooling the data across all studies, there was a highly significant recognition effect, with a Cohen's dz = 0.31, which is small but not negligible.

Essentially, this suggests that the reason why only 21.5% of the studies detected a significant recognition effect is that the studies just didn't have a large enough sample size to reliably detect it. Vadillo et al. show that the median sample size in these studies was 16, so the statistical power to detect an effect of dz = 0.31 with that sample size is just 21% - which, of course, is almost exactly the proportion that did detect one.

It seems therefore that people do have at least a degree of recognition of the stimuli in a contextual cueing experiment. Whether this means the learning is conscious as opposed to unconscious is not clear, but it does raise that possibility.

Vadillo et al. emphasize that they're not accusing researchers of using small sample sizes "in a deliberate attempt to deceive their readers". Rather, they say, the problem is probably that researchers are just going along with the rest of the field, which has collectively adopted certain practices as 'standard'.

This is actually a decades-old debate. For instance, over 20 years ago, the senior author of this paper, David Shanks, reviewed the evidence for implicit learning in several psychological paradigms (Shanks and St John, 1994), concluding that "unconscious learning has not been satisfactorily established in any of these areas."

I would say that in general, there is an asymmetry in how we conventionally deal with data. We hold positive results to higher standards than negative ones (i.e. we require a positive result to be <5% likely under the null hypothesis, but we don't require a negative result to have >95% statistical power). This asymmetry generally ensures that we are conservative in accepting claims. But it has the opposite effect when a negative result is itself part of the claim - as in this case.
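
For readers who want to check the numbers, here is a rough sketch of the power arithmetic. It is not Vadillo et al.'s own analysis. It assumes the recognition scores behave like normally distributed difference scores with a true standardized effect of dz = 0.31, tested with a two-sided one-sample t-test at the 5% level. The function names, the number of simulations and the random seed are all my own choices for illustration. The first function estimates power at n = 16 by simulation. The second uses a normal approximation to ask how many participants a study would need to reach 95% power.

```python
import math
import random
from statistics import NormalDist

# Two-sided 5% critical value of Student's t with 15 degrees of freedom (n = 16).
T_CRIT_DF15 = 2.131


def simulated_power(dz=0.31, n=16, t_crit=T_CRIT_DF15, n_sims=20000, seed=1):
    """Estimate the power of a two-sided one-sample t-test by Monte Carlo.

    Assumption: each participant's score is drawn from Normal(dz, 1),
    so the true standardized effect size is dz.
    """
    rng = random.Random(seed)
    significant = 0
    for _ in range(n_sims):
        scores = [rng.gauss(dz, 1.0) for _ in range(n)]
        mean = sum(scores) / n
        var = sum((s - mean) ** 2 for s in scores) / (n - 1)
        t = mean / math.sqrt(var / n)
        if abs(t) > t_crit:
            significant += 1
    return significant / n_sims


def approx_n_for_power(dz=0.31, power=0.95, alpha=0.05):
    """Normal-approximation sample size for a two-sided test.

    This slightly underestimates the n an exact t-test calculation would give.
    """
    z = NormalDist()
    z_alpha = z.inv_cdf(1 - alpha / 2)
    z_power = z.inv_cdf(power)
    return math.ceil(((z_alpha + z_power) / dz) ** 2)


if __name__ == "__main__":
    print(f"Simulated power at n = 16: {simulated_power():.2f}")
    print(f"Approximate n for 95% power: {approx_n_for_power()}")
```

Under these assumptions the simulated power comes out at about one in five, in line with the 21% figure above. The approximate sample size for 95% power is well over a hundred participants, far more than the median of 16.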

Vadillo MA, Konstantinidis E, & Shanks DR (2015). Underpowered samples, false negatives, and unconscious learning. Psychonomic Bulletin & Review PMID: 26122896