In the earliest days of medicine, people needed a trip to the doctor like a hole in the head. Because that’s exactly what they got: Healers and witch doctors were downright wanton in their use of trepanning — the practice of sharpening a stone to cut away a section of skull in fully conscious patients. Trepanning was done to relieve headaches, remove fractured skull fragments, provide spirits with an easy entrance or escape, sometimes just to provide rondelles — the leftover bony disks valued as charms or talismans.

At 7,000 years old, the stone trephine is considered the earliest surgical tool, but it’s not its antiquity that makes it important; it’s how the concept has remained relevant from the Neolithic to the now. Modern neurosurgeons don’t dangle rondelles around their necks, but they do still remove sections of skull.

The procedure, now called a craniotomy, is used to relieve pressure on a swelling brain or to grant access to a stroke victim’s hemorrhaging blood vessel, among other uses.

Although the trephine was the first tool to transform medicine, it was far from the last. Here, in no particular order, are 14 others carrying on in that heady tradition.

Hypodermic Needles

Hypodermic needles (Credit: Skryl Sergey/Shutterstock)

Physicians have wanted direct access to the bloodstream for a very long time. In 1492, as Columbus sailed the ocean blue, Pope Innocent VIII was on a very different path: That year he had an apoplectic stroke that left him in a coma. In an attempt to resuscitate him with an infusion of fresh blood, his physician cut open the veins of three healthy boys, then those of the pontiff, and stitched them all together. All four died from his macramé transfusion method.

Over the following centuries, various other attempts at intravenous infusion — including one involving a quill and pig’s bladder — met with similar results. It wasn’t until 1844 that an Irish physician named Francis Rynd developed a hollow needle fine enough to both pierce skin and administer or extract fluids. By 1853, other doctors had added glass, a steel tube and a plunger to Rynd’s needle to create essentially the same hypodermic syringe we know today.

Absolutely everything in medicine changed. In a flash, needles outpaced all bartenders ever born for providing therapeutic shots. Today, they’re used for about 20 billion injections each year of such essential compounds as antibiotics, morphine, vaccines, anesthetics, anti-coagulants and insulin. And that’s just the legal stuff.

Maggots

There’s nothing in the tool rule book that says medical instruments can’t be alive. The Maya and Aboriginal Australians were among the first to realize this. They tightly tamped wounds with maggots, knowing the off-white wigglers would feast on infected and necrotized tissue. In more recent history, doctors in the Civil War, World War I and World War II all used maggots to treat infected wounds.

But the larval marvels haven’t been consigned to the history books: Today, more than 800 U.S. health care centers have used medical maggots on patients. The immature flies are creating buzz as an important option for treating open wounds that won’t heal, such as diabetic foot ulcers and wounds infected with methicillin-resistant Staphylococcus aureus (the MRSA superbug) or flesh-eating bacteria.

Stethoscopes

René Laennec, a French physician, learned to tap on a patient’s chest to diagnose pulmonary and cardiac conditions from none other than Napoleon Bonaparte’s personal physician. But, like talking through a tin can telephone, the technique had auditory limitations. So Laennec took a more direct route: pressing his ear to a patient’s chest to learn the difference between normal and abnormal heart and lung sounds.

This, too, had its drawbacks. It was difficult to hear clearly through the chests of his chubbier patients. Also, the social mores of the early 1800s made his head’s presence on the chest of a female patient awkward at best, highly objectionable at worst. To better deal with the plump and the priggish, he fashioned the first crude stethoscope in 1816. It was so quickly embraced and improved upon that, by the 1850s, its ubiquitous presence around doctors’ necks made it the emblem of medical authority.

There’s good reason they’re kept so immediately at hand: Stethoscopes are used constantly to pick up such things as irregular heartbeats, abnormal blood pressure, problems with lung function, diminished flow in veins and arteries, and worrisome silence in the bowels. They can even quickly diagnose an enlarged liver.

Medical historian Jacalyn Duffin at Queen’s University in Ontario argues the stethoscope’s invention redefined medicine itself, changing much of it from vague shrugs and symptoms to specific pathologies. What was previously just a cough, for example, suddenly became tuberculosis due to the diagnostic power of the stethoscope, accompanied by new and increasingly effective treatments.

Randomized Controlled Trials

In 2013, the London-based Medical Research Council, one of the world’s leading organizations for funding medical research, polled a group of physicians and leading thinkers as a way to mark its 100th anniversary. The question: What medical advance from the past century has made the greatest impact?

One particular answer came up multiple times, and it was surprising because it was a tool, not a treatment: the randomized controlled trial. An RCT randomly assigns study participants to either an experimental group or a control group, so that differences in outcomes can be attributed to the treatment rather than to how patients were selected.

Sir Simon Wessely, chair of Psychological Medicine at the Institute of Psychiatry at King’s College London, explained it this way: “It allows us to evaluate properly all the other discoveries and advances.” In other words, RCTs show us what works — and, just as importantly, what doesn’t work.
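The random assignment at the heart of an RCT is simple enough to sketch in a few lines. The Python below is a minimal illustration, assuming plain 1:1 allocation by shuffling a list of participant IDs; the function name `randomize` and the IDs are hypothetical, and real trials typically rely on more careful schemes such as block or stratified randomization.

```python
import random


def randomize(participants, seed=None):
    """Split participants into experimental and control groups at random.

    Hypothetical helper: simple 1:1 allocation by shuffling. If the count
    is odd, the control group gets the extra person. Real trials often
    use block or stratified randomization instead.
    """
    rng = random.Random(seed)      # seeded generator so an allocation can be reproduced
    shuffled = list(participants)  # copy so the caller's list is left untouched
    rng.shuffle(shuffled)
    half = len(shuffled) // 2
    return {"experimental": shuffled[:half], "control": shuffled[half:]}


if __name__ == "__main__":
    ids = [f"P{i:02d}" for i in range(1, 11)]  # ten hypothetical participant IDs
    groups = randomize(ids, seed=42)
    print(len(groups["experimental"]), len(groups["control"]))  # 5 5
```

Because chance alone decides who lands in which group, neither the doctor nor the patient can steer healthier people toward the new treatment.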

Amputation Kits

As weaponry has advanced over the long course of human warfare, so too has the severity of the injuries it inflicts on the battlefield. Often called the first modern war because of its devastating new weapons, the American Civil War from 1861 to 1865 set a new standard for its sobering ability to inflict complex wounds in unparalleled numbers.

New rifles extended the range of a volley from the 50 to 100 yards of musket fire to more than 500 yards, with much-increased accuracy. Those rifles also fired newly invented minié balls, soft lead bullets that could shatter 2 to 3 inches of bone and embed such debris as filthy clothing into the wound, creating an extremely high infection rate. And with the discovery of penicillin more than 60 years away, infection killed many soldiers who survived the initial wound.

The amputation saw had been around in one form or another since at least Roman times, when Aulus Cornelius Celsus, a Roman medical writer, described an amputation technique using a scalpel and bone saw. But it was the Civil War that turned amputation kits from little-used medical curiosities into indispensable life-saving tools.

Amputations, even in the unsanitary and rudimentary conditions of the time, turned complex injuries into simple ones by changing untreatable tattered wounds into manageable stumps. Surgeons performed more than 60,000 of them, about three-quarters of all surgeries in the war, and amputation saved more lives than any other wartime medical procedure.

All it took was a few minutes, a restraint team and a surgeon with an amputation kit containing scalpels, tenaculums to pull out and tie off arteries, and bone saws. Limbs were generally thrown into piles off to the side. One exception was the amputated right leg of Maj. Gen. Daniel Sickles, a Union commander struck by a 12-pound cannonball. He packaged it up in a small box and sent it to the Army Medical Museum with a card reading, “With the compliments of Major General D.E.S.” For years afterward, he visited his leg on the anniversary of its amputation.

Dialysis Machines

The ability to artificially maintain the function of a failing organ didn’t begin with the first kidney dialysis machine. Tools such as the iron lung saved thousands of polio victims’ lives when the disease caused respiratory failure.

But it was the invention of the first practical dialysis machine in the 1940s that showed doctors could replace the function of even the most complex organs almost indefinitely. Compared with the relatively simple lung, the kidney performs remarkably complex functions. And both the iron lung and its successor, the mechanical ventilator, are downright elementary in design compared with the many technologies that converge in a modern dialysis machine.

Most important, though, is the impact of dialysis. Over 430,000 Americans currently receive lifesaving dialysis, an increase of 57 percent since 2000, making it the most common form of organ replacement therapy in use.

Condoms

Condoms (Credit: Stockbyte/Getty Images)

The earliest evidence of condoms is found in a 12,000-year-old cave painting in Europe. Rubbers bounced around for millennia as a form of birth control, but it was only after 1494 that they truly became a medical device of extraordinary importance.

That year, a massive outbreak of syphilis began in Europe and soon spread to Asia, decimating the infected: “Its pustules often covered the body from the head to the knees, caused flesh to fall off people’s faces, and led to death within a few months,” according to one description.

Condom use to prevent disease began in earnest. The first versions used such materials as oiled linen and silk, sheep intestines, goat bladders and thin sheaths of leather. It took until 1855 for the first rubber prophylactics to come on the market, using Charles Goodyear’s recently invented vulcanization process. Modern latex versions arrived in the 1920s.

Few medical devices have played as large a role as the condom in giving doctors a tool for preventing the scourge of STDs. For example, a report by the National Institutes of Health showed that correct and consistent use of condoms cuts the risk of contracting HIV by 85 percent. Condoms also lower the risk of other STDs, including chlamydia, syphilis, herpes and gonorrhea.

Transorbital Lobotomy Orbitoclasts

Transorbital lobotomy orbitoclast (Credit: Brian Kubasco/Museum Oddities)

Two things warrant the inclusion of the transorbital lobotomy orbitoclast on any list of tools that most changed medicine: what it did and what it spawned.

Invented in 1946 by Walter Freeman, an American neurologist, as a faster, easier way to perform a lobotomy, the orbitoclast was controversial from the start. Lobotomies surgically destroy connections and tissue in the brain’s prefrontal cortex; they were used to treat depression, panic disorders, schizophrenia and other mental illnesses.

Many in medicine already viewed lobotomies as barbaric. And Freeman’s orbitoclast made the procedure quick and easy. He would simply lift the upper eyelid away from the eyeball, insert the sharp point of the orbitoclast (essentially a modified ice pick), hammer it through the thin bone at the top of the socket, and pull it back and forth. The patients, sedated but still conscious, were usually sent home with sunglasses to hide any bruising.

The medical advance of the orbitoclast was unquestionable: It turned what had been major brain surgery into an outpatient procedure. Freeman, a fervent evangelizer of his invention, personally performed over 4,000 of the 60,000 lobotomies done in the U.S. and Europe between 1936 and 1956. One study showed that 63 percent of lobotomized patients saw improved symptoms, 23 percent had no improvement, and another 14 percent got worse or died. But for a procedure so horrifying, that many neutral or negative results was too high a price.

By the 1950s, the medical community and the general public wanted alternatives. This is how the orbitoclast changed medicine a second time: The search for alternatives spurred the psychiatric community to develop psychoactive drugs and new talk therapies.

Chlorpromazine, used to treat schizophrenia, became available in the U.S. in 1955. The antipsychotic haloperidol followed in the 1960s, and together they marked the first wave of antipsychotic drug therapy.

Freeman used his cherished orbitoclast for the last time in 1967 on a longtime patient — a woman he had already twice lobotomized. She died of a cerebral hemorrhage, and he was banned from further operations.

Permanent Markers

Wrong-site surgeries happen a lot — researchers estimate about 20 times a week in the United States alone. That can lead to such disasters as a healthy breast being removed instead of a diseased one, or the wrong knee getting turned into titanium.

Fortunately, the Sharpie is mightier than the scalpel. “Wrong-site surgery is preventable by having the surgeon ... place his or her initials on the operative site using a permanent marking pen prior to the patient being moved to the location of the procedure and then operating through or adjacent to his or her initials,” says the American Academy of Orthopaedic Surgeons. One study estimated this simple scrawl could eliminate about 62 percent of wrong-site surgeries.

Toothbrushes

Toothbrushes (Credit: Stigur Karlsson/Getty Images)

More than 7,000 years ago, Sumerians laid the blame for dental decay at the feet of tooth worms (or, if you want to be anatomically accurate, the paired setae of tooth worms). In the millennia since, a series of teeth-cleaning methods have drifted in and out of fashion, including twigs with frayed ends, toothpicks, handles with boar bristles and the intoxicating practice of cleaning teeth with a sponge soaked in brandy.

Despite all this creativity, the main function of dentists and their surrogates over the centuries was to pull out the rotten teeth and, occasionally, replace them with false ones.

Then, in 1938, Dr. West’s Miracle-Tuft toothbrush arrived with bristles made from DuPont’s newly invented nylon: the type of nylon-bristled brush you hold in your hand every day. And the toothbrush as a device that changed the course of medicine was born.

Soldiers in World War II used the newly cheap and effective brushes with regimented fervor and became aseptic ambassadors of oral hygiene upon their return to home shores. As a result, we now live in an age where people are keeping their teeth longer than ever before.

And that matters for much more than looking spiffy in a selfie. A burgeoning body of research shows a healthy mouth means a longer and better life. One recent study, which followed 5,611 older adults in California for nine years, found that never brushing at night increased the risk of death by 20 to 35 percent. Not flossing meant a 30 percent higher chance of dying during the study period. Losing all teeth, even if replaced with dentures, also gave a 30 percent higher risk. And the best way to avoid these brushes with death is through regular visits to Dr. West or his many descendants.

Ambulances

The first ambulances, created by Anglo-Saxons in A.D. 900, were simple in both purpose and design. The purpose was patient transport. The design was a hammock combined with a cart. The ambulance as a vehicle of change didn’t begin to get real traction until 1952, and it took the horrific Harrow and Wealdstone rail crash, England’s most catastrophic rail accident, to make it happen.

Public outrage was intense when people learned many of the 112 dead could have survived with faster treatment. In response, ambulances began shifting into a new role as mobile hospitals.

Governments around the world began experimenting with the new heals-on-wheels model. In 1968, when the city of Jacksonville, Fla., launched its first ambulance service capable of both on-site and in-transit emergency care, the effect was dramatic. Within three years, the number of people who initially survived a car crash but later died of their injuries dropped by 24 percent.

Now called emergency medical services, or EMS, the field has continued to evolve its training, methods and machines. For example, one study showed that when helicopters staffed with a doctor and nurse transported trauma victims to a hospital, mortality was cut in half.

Defibrillators

Defibrillator (Credit: Stigur Karlsson/Getty Images)

The idea of restarting stopped hearts with electricity had been kicking around in medical circles since the 18th century, and even showed up as a way to animate the monster in Mary Shelley’s 1818 novel, Frankenstein. But it took until 1899 for two University of Geneva physiologists to demonstrate it on some unlucky Swiss dogs, whose exposed hearts were both stopped and restarted with shocks.

A 14-year-old patient of Claude Beck, a pioneering heart surgeon at Case Western Reserve University in Cleveland, fared a whole lot better. In 1947, the boy was the first human to be successfully revived with a defibrillator, one Beck designed.

Much has improved since those early days of the spread-sternum jump-and-jolt. By the 1950s, paddle electrodes provided shocks through the chest wall. In the 1960s, the units became portable enough to begin showing up in ambulances. By the early 1990s, ever-smaller automated external defibrillators (AEDs) were in police cars.

Today, millions of no-experience-necessary AEDs are publicly available, with 200,000 more being added each year in such locations as workplaces, airports, schools, shopping malls and churches. There are even $1,500 models for home use. The sophisticated devices actually talk people through the process of using them. And what a difference they’re making.

Studies show that if a bystander promptly uses an AED on someone in cardiac arrest, that person’s chances of survival double or triple. Now that’s a shock.

Medical Imaging

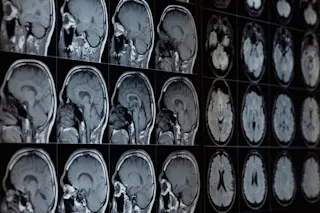

If stethoscopes transformed doctors’ ability to make diagnoses simply by letting them listen to your innards, imagine the advantage medical imaging provides by letting them see inside you.

Born of Wilhelm Röntgen’s accidental yet Nobel Prize-winning discovery of the X-ray in 1895, medical imaging progressed to the first diagnostic ultrasounds of the 1940s, and then on to today’s sophisticated 3-D imaging from computed tomography (CT), magnetic resonance imaging (MRI) and positron emission tomography (PET).

It’s hard to overstate the impact these machines have had on patients: earlier diagnoses, faster and better treatment and a vastly reduced need for exploratory surgery. For example, the number of exploratory surgeries performed to diagnose abdominal problems declined from 85,000 in 1993 to around 35,000 by 2006. That reduction of nearly 60 percent was echoed in exploratory lung surgeries over the same period.

Band-Aids

Band-Aid (Credit: SPWIDOFF/Shutterstock)

It may seem odd to consider anything adorned with Rugrats or Spider-Man as a medicine-changing tool, but don’t make the mistake of dismissing Band-Aids simply because they can be cute. Huge numbers of people see the world’s first self-adhesive bandages not just as temporary fixes but as devices that help put the care into health care.

A caring cotton buyer for Johnson & Johnson (Band-Aids’ maker) invented them in 1920 so he could tend to the many cuts and minor burns his beloved and accident-prone wife got while cooking and keeping house.

By 1942, millions of Johnson & Johnson’s adhesive bandages accompanied World War II soldiers overseas. In 1963, Mercury astronauts took them into space. Back here on Earth, Band-Aids became the go-to therapy for parents wanting to make boo-boos better.

They act as medals for bravery in the face of inoculations, they hide the tear-inducing sight of a skinned knee, and they actually do help minor wounds heal better with fewer scars and infections. Turns out, we really are stuck on Band-Aids. By 2001, the number manufactured had rocketed past 100 billion.