This story was originally published in our May/June 2022 issue as "Hope for Holograms."

“Help me, Obi-Wan Kenobi. You’re my only hope,” pleads a pale, flickering image of Princess Leia, projected from the faithful droid R2-D2. In an iconic scene from the first Star Wars film (1977), heroes Kenobi and Luke Skywalker are transfixed by the three-dimensional display, which materializes before them as if a miniature Leia were present in the room.

For sci-fi fans, the term hologram often evokes images of floating projections that present characters with interactive intel to help them navigate a futuristic world. Different iterations of the concept have emerged on screen in recent years, seen in everything from superhero flicks like Iron Man to the Netflix series Black Mirror. In literature, they cropped up as early as Isaac Asimov’s 1942 story, “Foundation,” in which a character’s pre-recorded video messages are played as 3D projections.

While existing technologies for projecting artificial images onto real-world environments — from head-mounted devices to stage-size projector systems — can create compelling 3D experiences, they generally fall short of the interactive, free-floating images envisioned by filmmakers and futurists. Nevertheless, researchers and tech companies are currently developing display systems they hope will someday deliver on science fiction’s promise.

Spoiler alert: Today’s solutions aren’t technically holograms. That’s because conventional holography involves recording a three-dimensional image on a two-dimensional medium.

Originating as ghostly red- or green-tinted pictures of inanimate objects, holograms are often used nowadays as security features — think of the shiny labels on credit cards or the iridescent watermarks on various IDs, for example. In its early years, holography was generally accomplished by splitting a laser beam in two, then shining one beam at an object before recombining the beams, creating an interference pattern that was imprinted on a light-sensitive film. Hungarian physicist Dennis Gabor developed the technique in the 1940s using an electron beam, more than a decade before the advent of the laser.

But, because traditional holograms must be viewed via flat surfaces like credit cards or photographic plates, creating a Leia-like image in the open air would be impossible using conventional holography, says Daniel Smalley, an associate professor of electrical and computer engineering at Brigham Young University (BYU). Instead, devices that produce aerial 3D graphics, in which light-scattering image points are distributed in space, are generally categorized as volumetric displays. “Instead of looking at them like a television set, you can look at them like a water fountain, where you can walk around it and you don’t have to be gazing toward the screen to see that imagery,” Smalley says.

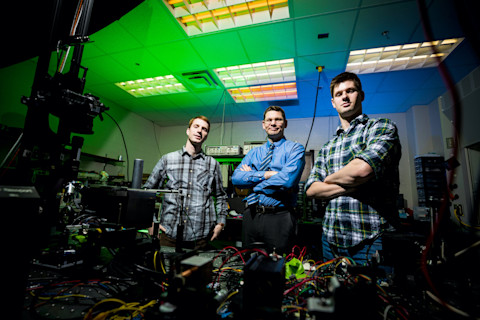

Engineers at Brigham Young University are developing volumetric displays in which icons and images appear to float in mid-air. (Credit: Courtesy of Dan Smalley Lab/BYU)

Although these aerial imaging systems are still in the early stages of development, Smalley and other technologists envision a not-so-distant future in which free-floating 3D projections are widely used for video chats, medical imaging and emergency displays.

Aiming to reproduce a Leia-like image in the real world, Smalley’s team at BYU pioneered a technique that pairs a laser with a dust-sized particle of cellulose to produce images that appear to float.

A laser beam containing pockets of low energy, or “holes,” can be used to trap the particle and drag it through the air, Smalley says. By illuminating the particle with red, green and blue beams as it streaks along a predetermined path, the system renders colorful graphics in mid-air. The image is redrawn at least 10 times per second, creating the illusion of a cohesive image. In other words, “we move the sparkler around fast enough that it looks like a continuous line,” Smalley says.
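As a rough illustration of the sparkler analogy, the short Python sketch below estimates how fast a trapped particle would need to travel to retrace an entire outline at a given refresh rate. The function names, the circular test path and the 5-millimeter radius are assumptions made up for this example, not details of the BYU system; only the figure of 10 redraws per second comes from the description above.

```python
import math


def path_length(points, closed=True):
    """Total length of a polyline through 2D points (same unit as the points)."""
    if len(points) < 2:
        return 0.0
    pairs = list(zip(points, points[1:]))
    if closed:
        # Return to the starting point so the outline can be retraced.
        pairs.append((points[-1], points[0]))
    return sum(math.dist(a, b) for a, b in pairs)


def required_speed(length_m, refresh_hz):
    """Average speed (m/s) needed to trace the full path once per redraw."""
    if length_m < 0 or refresh_hz <= 0:
        raise ValueError("length must be >= 0 and refresh rate must be > 0")
    return length_m * refresh_hz


def circle_outline(radius_m, n_points=360):
    """Hypothetical test shape: evenly spaced points on a circle."""
    step = 2 * math.pi / n_points
    return [(radius_m * math.cos(k * step), radius_m * math.sin(k * step))
            for k in range(n_points)]


if __name__ == "__main__":
    outline = circle_outline(0.005)        # assumed 5 mm radius
    length = path_length(outline)
    speed = required_speed(length, 10)     # 10 redraws per second
    print(f"path length: {length * 1000:.1f} mm, speed: {speed * 100:.1f} cm/s")
```

In this simple model, doubling either the outline's length or the refresh rate doubles the speed the particle must sustain, which hints at why larger images are harder to draw with a single particle.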

The team has produced thumbnail-size images of a butterfly, a spacecraft, and even a Princess Leia look-alike. Smalley says the system could be scaled up by trapping and scanning many particles at once. Plus, the images themselves could be made to look more solid by controlling how the particles reflect light in certain directions. By governing how light is scattered at each image point, the technology could also present multiple viewers different experiences depending on their perspective, he says. “Every person in the room looking at the same space could see something totally different,” Smalley says. He adds that a sufficiently advanced device could someday enable, say, a child to view his homework as a projection — while one parent makes a video call and the other plays a game in the same space.

And while other laser-based techniques, as well as some that use sound waves, offer intriguing possibilities, they all require a physical piece of matter to illuminate. A team of researchers across the Pacific has taken the sci-fi concept a step further, creating aerial images using only light.

Picture this: You’re asleep on a Saturday morning when the sound of an alarm clock erupts from your device. You turn over groggily to see the word SNOOZE projected in the air above your device. You reach out and touch the virtual button with your finger — a slight sensation tickling your fingertip — before drifting back to sleep.

Researchers from the University of Tsukuba in Japan are working on a technology that could make this scenario possible. Led by associate professor Yoichi Ochiai, the team creates graphics in the air using a laser that emits ultra-short pulses of light. The high-intensity beam can break down air molecules through ionization, which produces short-lived specks of light, or plasma. The system renders images by rapidly adjusting the focal point of the laser in three dimensions, generating thousands of emission points, or voxels, per second.

A previous version of the technology used nanosecond laser pulses, which have the unfortunate side effect of burning human skin. Ochiai’s setup, nicknamed Fairy Lights, employs far shorter femtosecond laser pulses that are much less dangerous despite their high peak intensity, Ochiai says. The tiny hearts, stars and fairies that the system projects are not only safe to touch but also responsive to contact. In one demonstration, the tabletop system projects a checkbox that can be filled in with your finger. “It feels kind of like sandpaper or an electrostatic shock,” Ochiai says.

Along with applications in augmented reality — such as a floating keyboard — the system’s haptic properties could be used to create a kind of aerial Braille, Ochiai says. He also envisions large-scale emergency displays that could be projected high over a city to warn residents about a natural disaster or direct them toward an escape route. While the initial images were no larger than a cubic centimeter, Ochiai says the scalability of the system depends on the size and power of the optical equipment. Large systems are currently cost-prohibitive, he says, adding that technologies that draw images using plasma will likely become more feasible over the next 10 to 20 years as the multi-million-dollar price tag decreases.

Daniel Smalley at BYU (center) and students Erich Nygaard (left) and Wesley Rogers (right) stand behind a laser table where they develop new technology for volumetric displays. (Credit: Courtesy Nate Edwards/BYU)

While laser-based approaches are in their infancy, research groups and private companies alike have developed various screen-based displays for generating 3D images. Many of them have been marketed as holograms, though they usually rely on other technologies, such as polarized glasses, near-eye displays or stacked LCD screens.

IKIN, a startup based in San Diego, is working to create three-dimensional displays through a new device that attaches to smartphones. Known as the RYZ (pronounced “rise”), the accessory features a patented lens, explains founder and Chief Technology Officer Taylor Scott. To a viewer looking through the portal, full-color objects appear against the real-world background.

Leveraging advanced AI algorithms, the device keys in on a user’s eyes and other cues not only to simulate stereoscopic depth but also to merge the photons coming from the background environment with the light being projected to the user’s retinas. This “additive processing” allows the system to continuously adjust an image in real time to compensate for the brightness and color of objects behind the image, says Scott.

“We track the environment around you and use that light that’s coming toward your eyes already and add to it to create the image that we want,” Scott says, adding that the device can be used in broad daylight, unlike most goggle-based systems and stage productions. Users can manipulate the high-resolution depth fields by touching the glasslike window, and multiple users can view different perspectives of the images depending on their position relative to the device.
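One simplified way to picture the additive idea Scott describes: an optical overlay can only add light to what already reaches the eye, so the display would emit the difference between the desired color and the background. The Python sketch below is a toy per-pixel model under that assumption. The function names and the 0-to-1 linear RGB scale are invented for illustration, and nothing here should be read as IKIN's actual algorithm.

```python
def additive_emission(target, background):
    """Light to add per channel so background + emission approaches target.

    Both colors are linear RGB tuples in the range 0..1 (an assumption of
    this toy model). Emission cannot be negative: an additive display can
    brighten a channel but not darken it.
    """
    return tuple(max(0.0, t - b) for t, b in zip(target, background))


def perceived(background, emission):
    """Color reaching the eye: background plus emitted light, clipped at 1."""
    return tuple(min(1.0, b + e) for b, e in zip(background, emission))


if __name__ == "__main__":
    target = (0.8, 0.5, 0.2)        # color we want the viewer to see
    background = (0.3, 0.6, 0.1)    # hypothetical scene behind the image
    emission = additive_emission(target, background)
    print("emit:", emission)
    print("seen:", perceived(background, emission))
```

In this toy model the green channel cannot be corrected, because the background is already brighter than the target there; a real system would have to work around that limit in ways this sketch does not attempt.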

Scott foresees a litany of applications for the tech, ranging from immersive video communications to advanced medical imaging techniques, such as brain scans and echocardiograms. Software developers will be able to create content for the device, while the RYZ app will enable users to play games and convert their existing digital images and videos into depth fields. “We’re providing consumers the ability to take all of their memories and relive them in a hologram,” Scott says.

Although the laws of physics will likely prevent technologists from generating Leia-like projections anytime soon, advances in volumetric display technologies are sure to bring increasingly compelling approaches into the mainstream.

For example, you might see this technology used in video games. And while IKIN has made a concerted effort to not get pigeonholed into the gaming space, video games are in the startup’s DNA. Scott is an avid gamer; in fact, the impetus for the display device was a longing to immerse himself in the world of one of his favorite console games. “The whole reason I invented this system is because I wanted to play Legend of Zelda in a hologram,” Scott says. “I love Zelda.”